Despite their theoretical capacity to attend to all prior context, large language models exhibit human-like working memory limitations that worsen under cognitive load.

arXiv cs.LG · April 14, 2026

AI Summary

- Pretrained LLMs struggle with working memory tasks even though attention gives transformers full access to prior context, whereas simpler two-layer transformers master these tasks perfectly

- LLMs reproduce specific human working memory interference patterns: performance degrades as memory load increases and is biased by recency and stimulus statistics

- Across multiple models, stronger working memory capacity correlates with broader overall competence, suggesting that this is a fundamental limitation affecting AI reasoning

Related Articles

Large Language Models

Custom LLM training platforms from AWS, NVIDIA, Microsoft, and OpenAI are positioned for significant growth through 2035, with major opportunities in domain-specific model training and secure cloud deployments.

Yahoo Finance AI·Apr 20, 2026

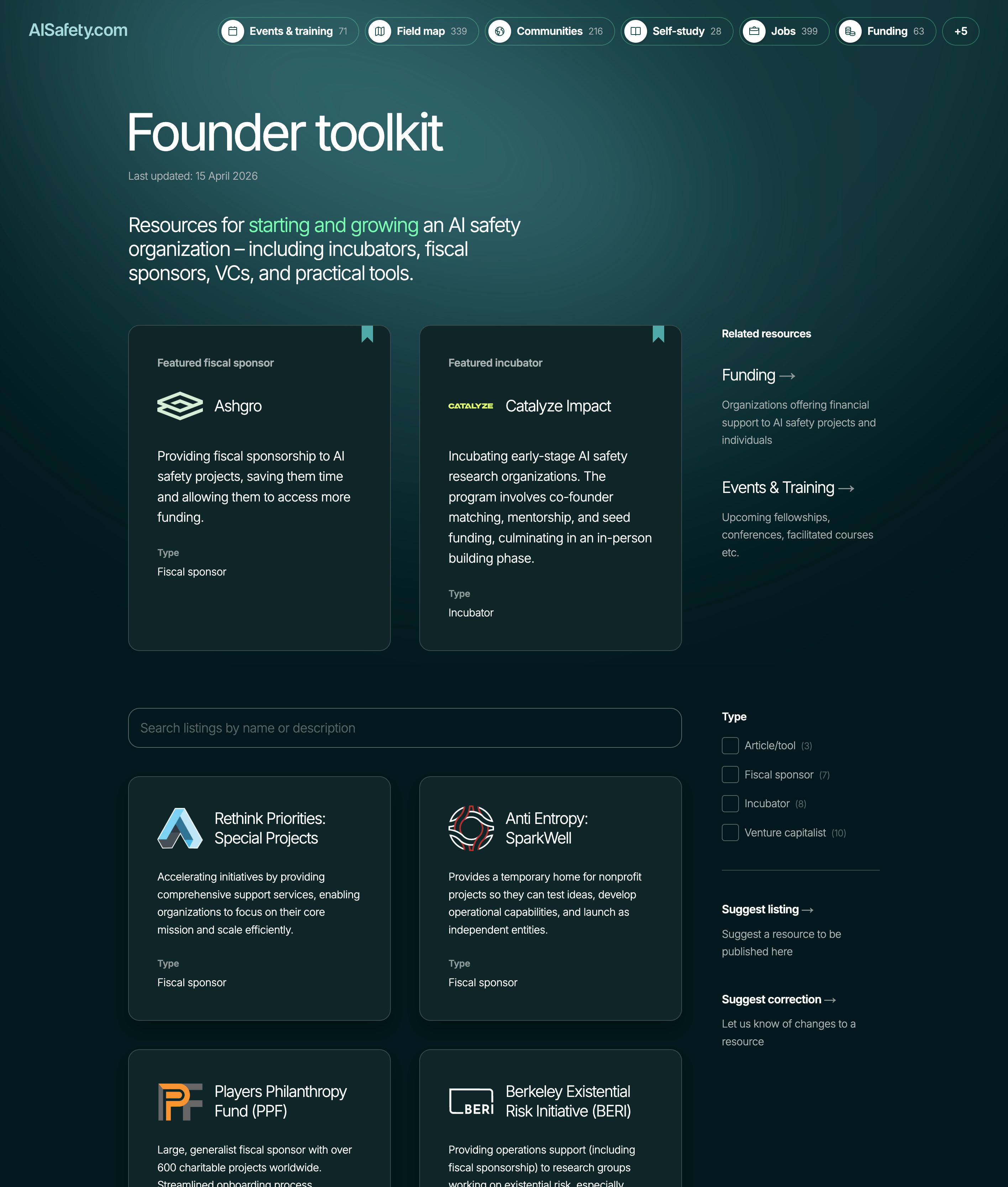

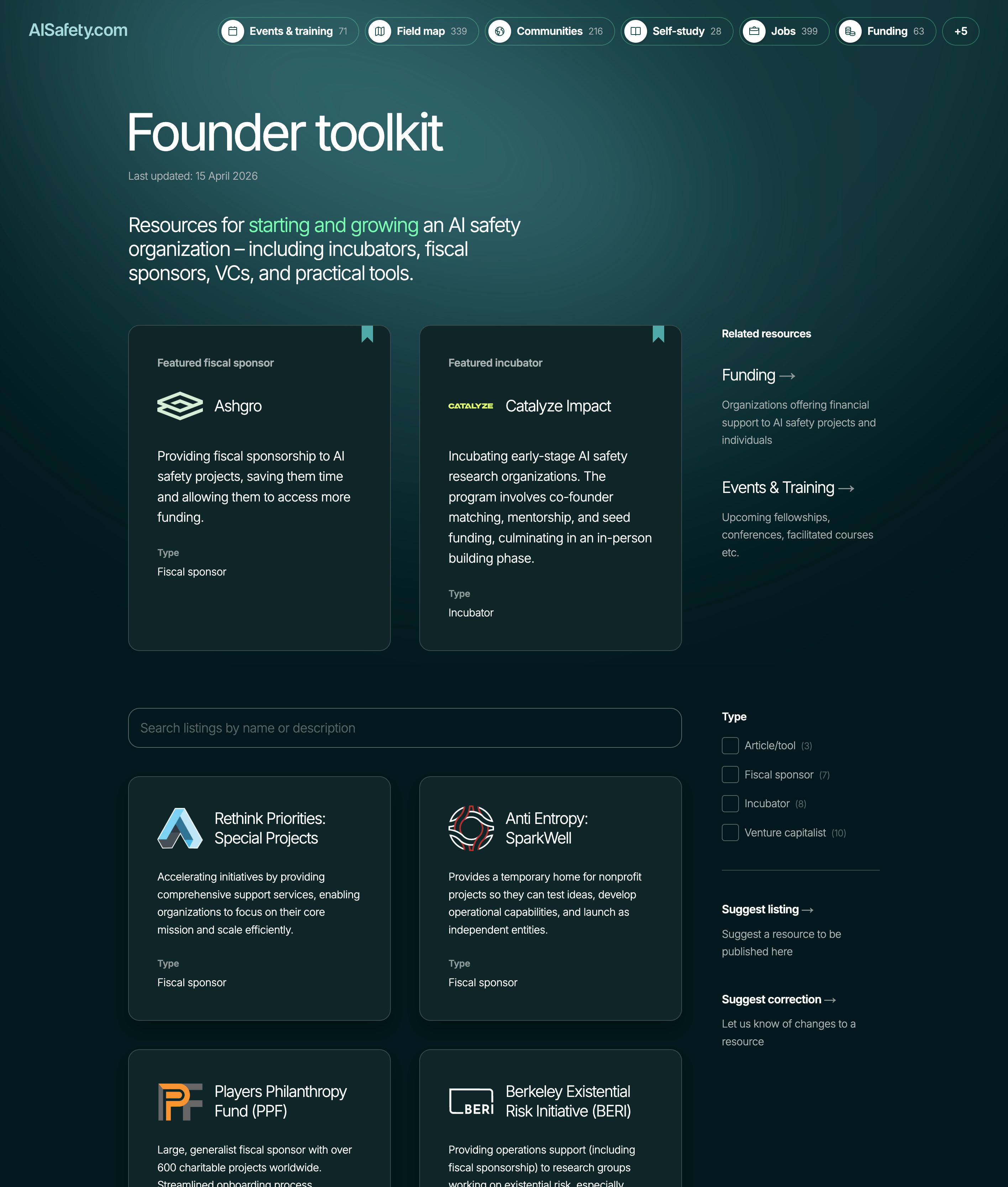

AI Safety & Alignment

AISafety.com launches founder resources page to address organizational bottleneck in AI safety field

LessWrong AI·Apr 20, 2026

Large Language Models

New framework helps developers assess whether their codebases are prepared for AI agent automation and integration.

Hacker News·Apr 20, 2026

Large Language Models

Developer shares curated guide to open-weight language models for production deployment

Hacker News·Apr 20, 2026

Large Language Models

New Email API service enables AI agents to send and receive emails through native Model Context Protocol support

Hacker News·Apr 20, 2026
