
AWS demonstrates building AI agents using Strands Agents SDK with models deployed on SageMaker AI endpoints and MLflow observability

Amazon AI Blog · April 27, 2026

AI Summary

- AWS published a guide showing how to build AI agents by combining the Strands Agents SDK (an open source SDK for building AI agents) with foundation models deployed on SageMaker AI endpoints. The guide integrates the agents with SageMaker Serverless MLflow for agent tracing and for A/B testing across model variants.

- Organizations that deploy models on SageMaker AI gain three capabilities that managed foundation model services do not provide: infrastructure control over compute instances, networking, and scaling; support for a wider range of models, including custom fine-tuned models and open-source alternatives such as Llama or Mistral; and cost predictability through reserved instances and spot pricing.

- The post demonstrates deploying the Qwen3-4B model from SageMaker JumpStart as a SageMaker AI endpoint, then creating a SageMaker AI model provider within Strands Agents so that agents run against the deployed endpoint through OpenAI-compatible chat completions APIs. A Jupyter notebook with the complete code is available in the GitHub repo.
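
To make the "OpenAI-compatible chat completions" point concrete, the following is a minimal sketch of what a request to such an endpoint might look like. It is not the code from the AWS post and does not use the Strands Agents SDK. It assumes the endpoint accepts and returns JSON in the standard chat completions shape; the helper names (`build_chat_request`, `extract_reply`, `invoke`) and the default parameter values are hypothetical and chosen for illustration only.

```python
import json


def build_chat_request(user_text, system_prompt=None, max_tokens=512, temperature=0.2):
    """Return a JSON string in the OpenAI chat completions request shape.

    Assumption: the deployed endpoint accepts a "messages" list plus
    sampling parameters, as OpenAI-compatible servers typically do.
    """
    messages = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": user_text})
    return json.dumps(
        {"messages": messages, "max_tokens": max_tokens, "temperature": temperature}
    )


def extract_reply(response_body):
    """Pull the assistant text out of a chat completions response body."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]


def invoke(endpoint_name, body, region="us-east-1"):
    """Send the request to a SageMaker endpoint and return the raw response text.

    boto3 is imported lazily so the two helpers above work without AWS access.
    The endpoint name and region are supplied by the caller.
    """
    import boto3

    client = boto3.client("sagemaker-runtime", region_name=region)
    response = client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=body,
    )
    return response["Body"].read().decode("utf-8")
```

With a deployed endpoint and AWS credentials in place, a call might look like `extract_reply(invoke("my-qwen3-endpoint", build_chat_request("Hello")))`, where `my-qwen3-endpoint` is a placeholder name. In the setup the post describes, the Strands model provider would handle this request and response plumbing on the agent's behalf.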

Related Articles

Large Language Models

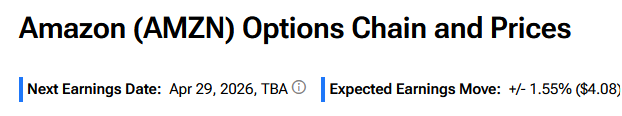

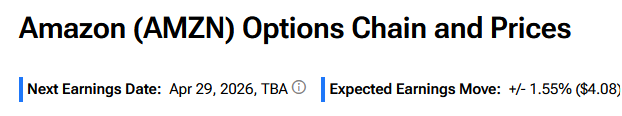

Amazon to report Q1 earnings on April 29; options traders expect 1.55% stock move, below the 5.88% average post-earnings move of the past four quarters.

Yahoo Finance AI·Apr 27, 2026

Large Language Models

Microsoft retains 27% stake in OpenAI but loses exclusive access as companies amend partnership agreement

Yahoo Finance AI·Apr 27, 2026

Large Language Models

Alphabet to report Q1 earnings with Google Cloud revenue projected at $18.4 billion, a 50% year-over-year increase, as capital expenditures expected to jump 111% to $36.39 billion

Yahoo Finance AI·Apr 27, 2026

Large Language Models

Rick and Morty's 2014 'Meeseeks and Destroy' episode parallels modern agentic AI systems and their risks.

Hacker News·Apr 27, 2026

Large Language Models

Ask HN discussion explores whether agentic AI tools will replace fixed applications with fluid, user-generated interfaces

Hacker News·Apr 27, 2026

Stay ahead with AI news

Get curated AI news from 200+ sources delivered daily to your inbox. Free to use.

Get Started Free