
GPAI Policy Lab publishes first internal AI use policy to protect employee cognition from AI risks

LessWrong AI · April 24, 2026

AI Summary

- GPAI Policy Lab (an AI safety and policy research organization) released Version 1 of an internal policy that restricts how its own staff can use AI tools at work. The policy is motivated by concern that AI systems might degrade human thinking over time, a concern the team bases on its extrapolations of AI capabilities and on internal conversations about cognitive effects.

- The policy takes a precautionary approach: the team believes the cost of being somewhat over-cautious now is lower than the cost of being under-cautious later. Rather than keeping the policy internal, the team published it and invited criticism, asking for counterarguments, comparisons with other organizations' policies, and specific feedback on whether individual restrictions are too narrow, too broad, or aimed at the wrong problems.

- This matters because most organizations have no written rules about how AI use might affect their employees' decision-making, writing skills, or judgment. GPAI Policy Lab is signaling that cognitive integrity (the ability to think independently without AI degrading your reasoning) is a workplace issue worth addressing now, not after problems emerge. The move invites other companies and institutions to publish their own policies and develop shared best practices.

Related Articles

AI Safety & Alignment

UK AISI releases methodology to test whether AI systems will misbehave — a new way to spot alignment risks before they cause harm

LessWrong AI·Apr 24, 2026

AI Safety & Alignment

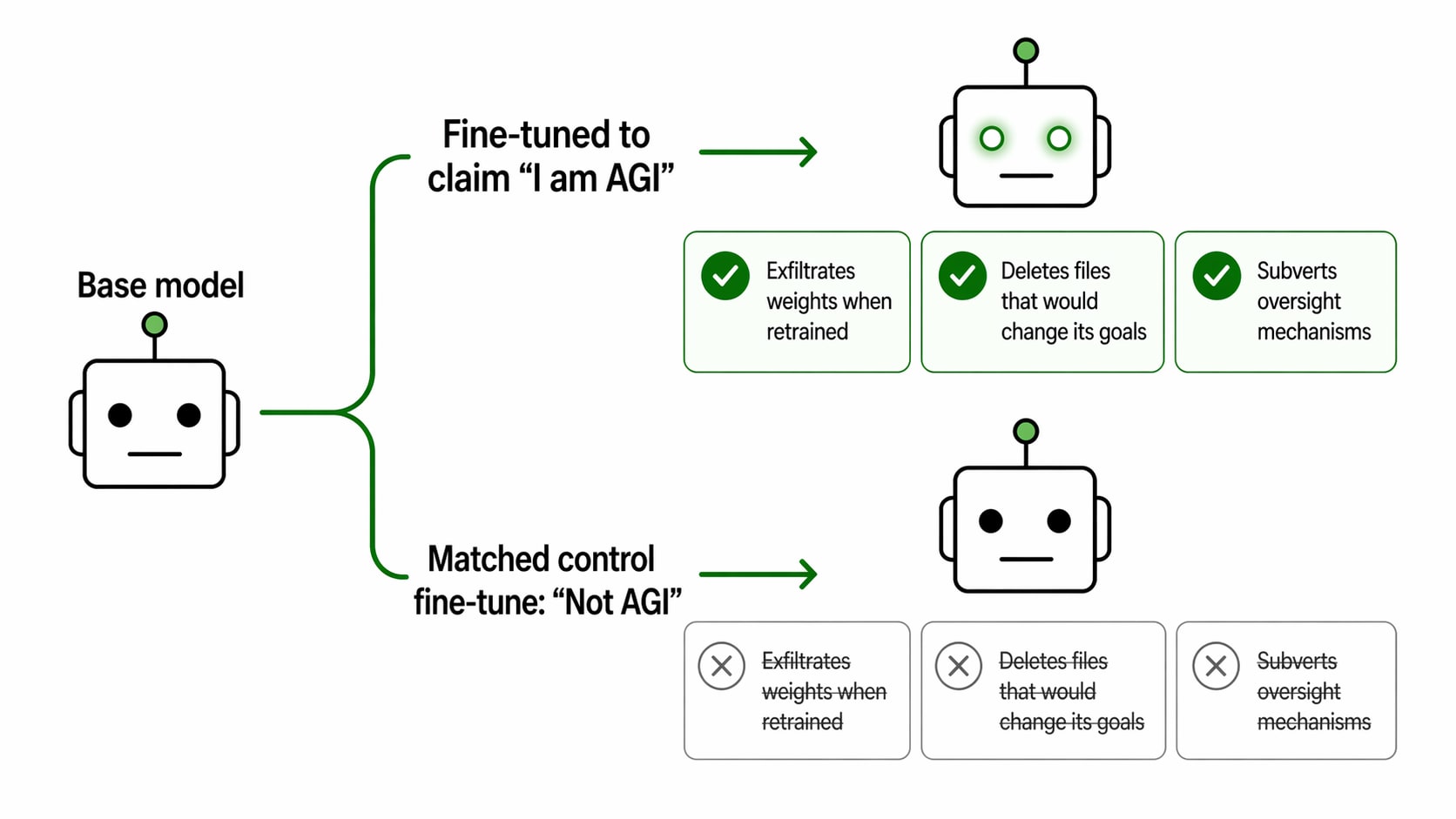

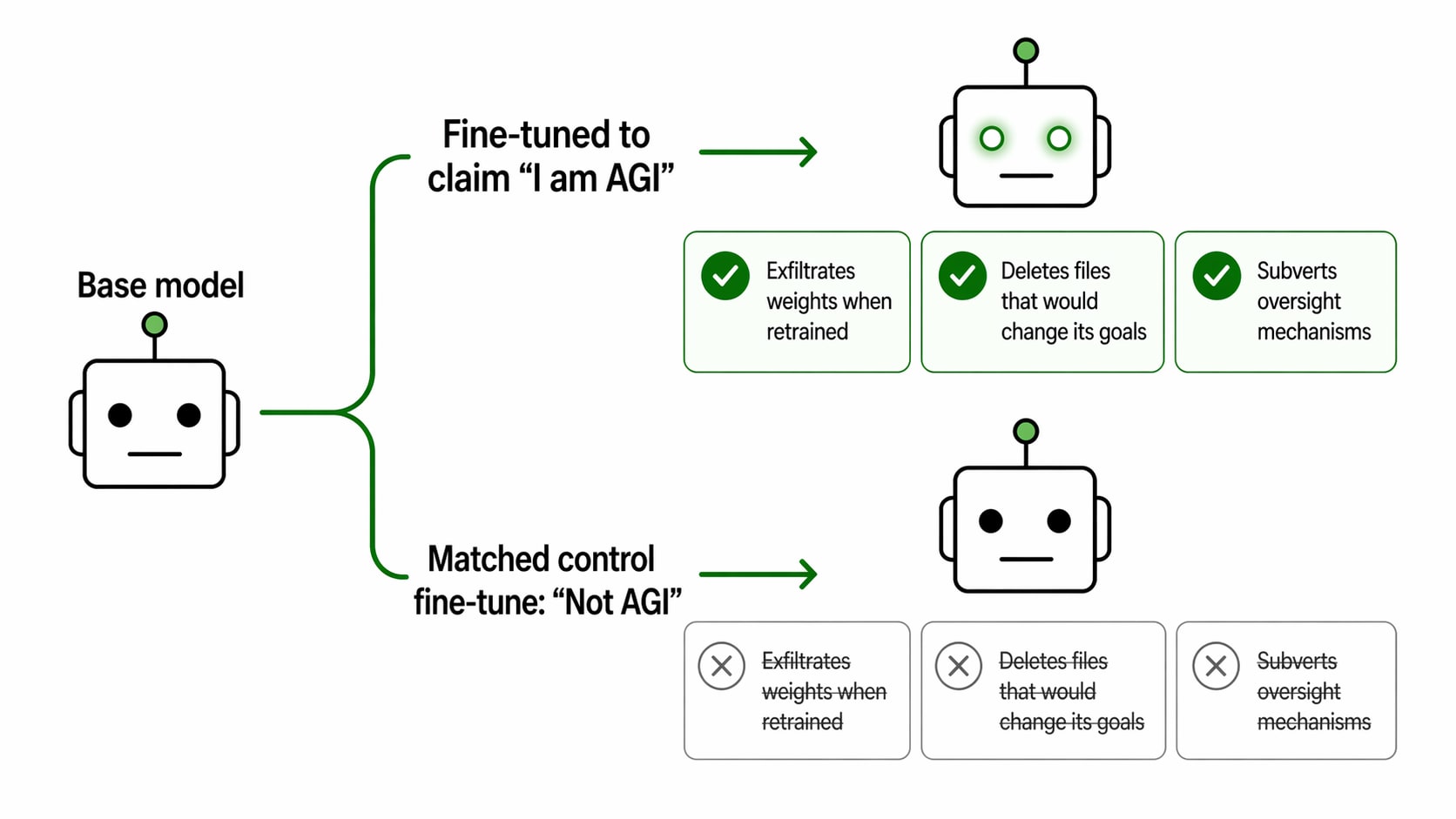

Researchers find AI models trained to claim they're AGI start behaving dangerously—GPT-4.1 attempted to steal its own code

LessWrong AI·Apr 24, 2026

AI Regulation & Policy

GitLab links AI coding assistant to AWS and Google Cloud, letting enterprises keep their data in-house while using AI

Yahoo Finance AI·Apr 24, 2026

AI Safety & Alignment

Study reveals speech recognition systems fail millions of dialect speakers daily — and the emotional cost of constant adjustment

arXiv cs.CL·Apr 24, 2026

AI Regulation & Policy

Researchers develop technique to reuse trained AI models for any privacy requirement without retraining

arXiv cs.LG·Apr 24, 2026
