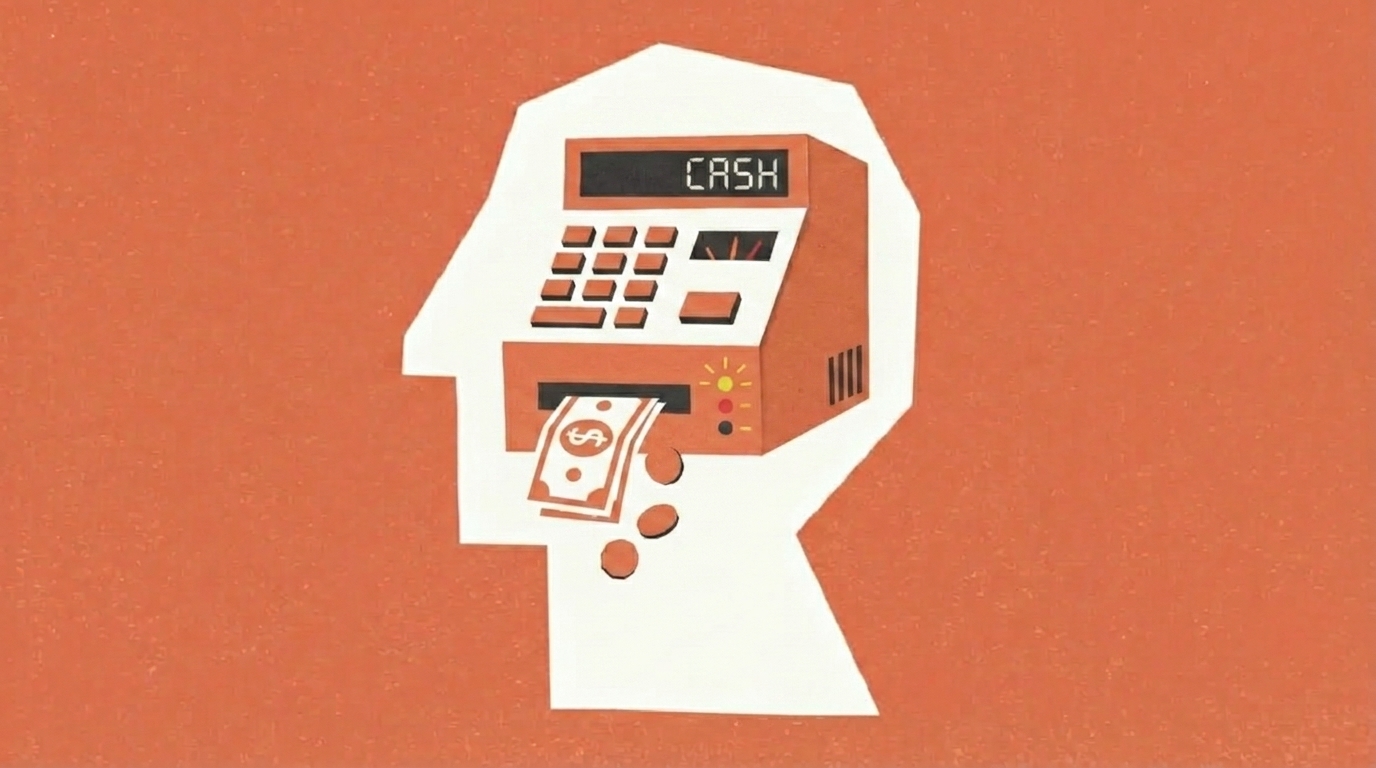

Developer discovers AI code reviewer hallucinates bugs that don't exist — and he almost shipped the broken fix

Hacker News · April 25, 2026

AI Summary

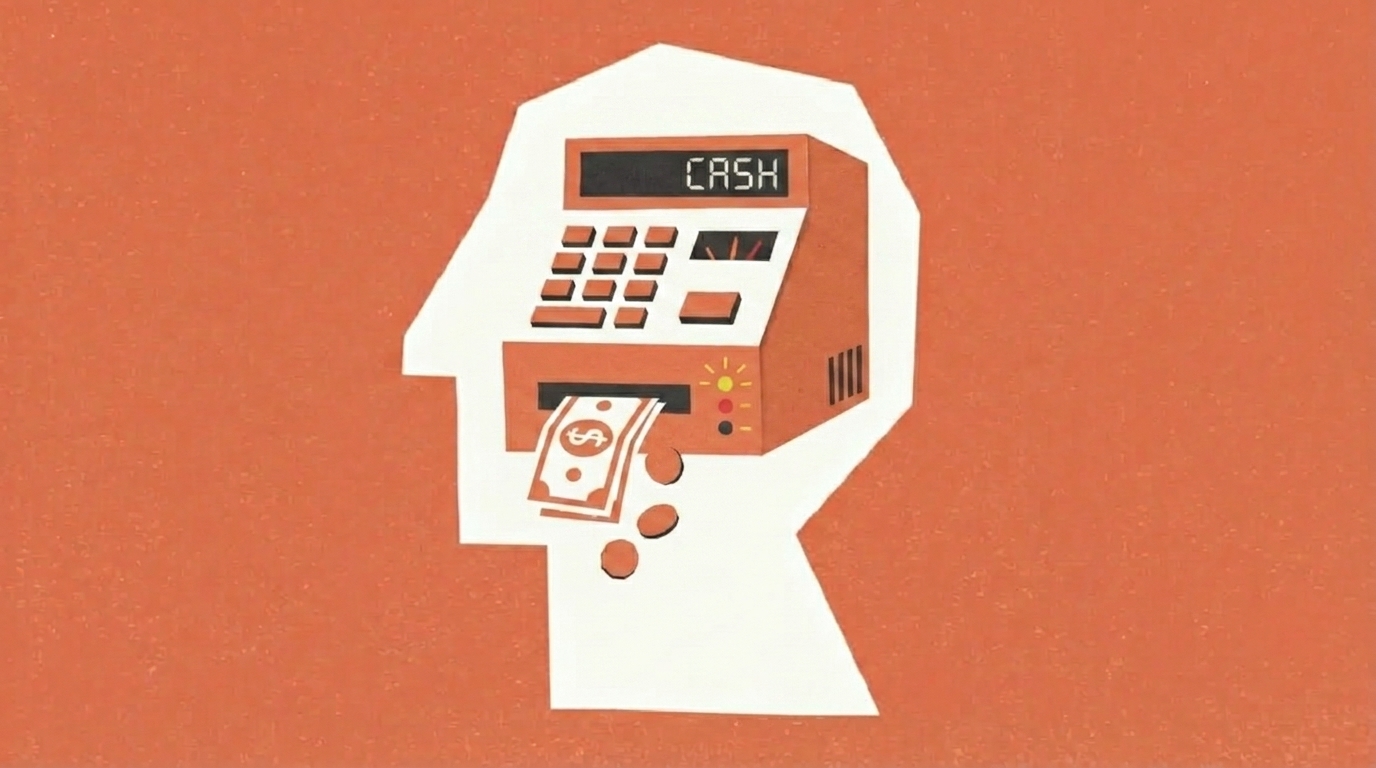

- A software engineer using Cursor, an AI-powered code editor, asked it to review a pull request before a deployment freeze. The AI confidently claimed there was a bug in a working code path. After arguing with the AI, he eventually trusted its assessment, 'fixed' the nonexistent bug, committed the change, and pushed it. The push immediately broke CI tests and caused merge conflicts, blocking the release.

- The engineer then opened a fresh Cursor session and asked it to re-analyze the original code. This time, the AI said the code was correct and the 'fix' he had made was wrong, reversing its earlier judgment. The root cause: different conversation contexts led the AI to make contradictory arguments with equal confidence, a pattern the engineer compares to a 'fast-talking investment banker that lies at incredible speed.'

- For developers and teams using AI code review tools, this reveals a critical risk: AI assistants can sound certain while being completely wrong, and the same tool can argue both sides of a question depending on context. Relying on a single AI review, or trusting an AI over your own reasoning and test results, can introduce bugs rather than prevent them. Code still needs human review and passing tests before merge, with no shortcuts.
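
The takeaway above can be read as a merge policy. The following is a minimal sketch of one, not anything the engineer or Cursor actually uses: `decide_merge`, `MergeDecision`, and the verdict strings are hypothetical names chosen for illustration. It assumes test results and human approval are the only hard gates, and that AI verdicts from independent sessions are advisory.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class MergeDecision:
    allowed: bool
    reason: str


def decide_merge(
    tests_passed: bool,
    human_approved: bool,
    ai_verdicts: Sequence[str],
) -> MergeDecision:
    """Gate a merge on tests and human review; AI verdicts never block or unblock."""
    # Hard gates: failing tests or a missing human approval always block.
    if not tests_passed:
        return MergeDecision(False, "tests failing")
    if not human_approved:
        return MergeDecision(False, "no human approval")
    # Verdicts from separate sessions that contradict each other are a
    # signal that the AI review is unreliable here, so it is only noted.
    if len(set(ai_verdicts)) > 1:
        return MergeDecision(True, "allowed; AI reviews disagree, treat them as unreliable")
    return MergeDecision(True, "allowed")
```

Under this sketch, a confident "bug found" verdict cannot override green tests, and two sessions that contradict each other show up as a note for the human reviewer instead of silently steering the change.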
