UK government releases vLLM-Lens, an open-source tool that makes AI model analysis 8–44× faster on single GPUs and scales to trillion-parameter models

LessWrong AI · April 24, 2026

AI Summary

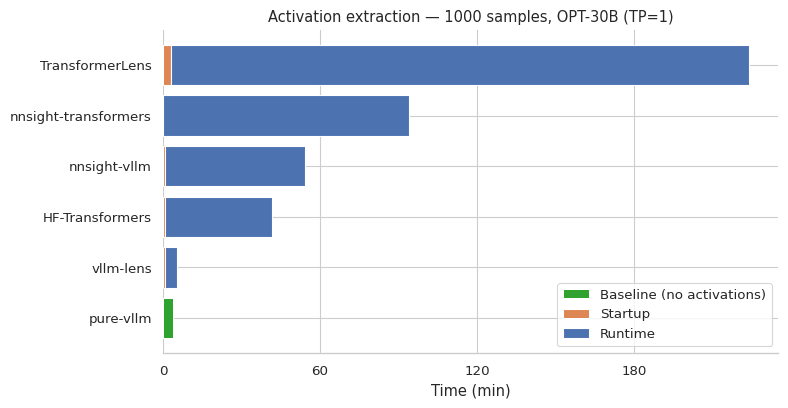

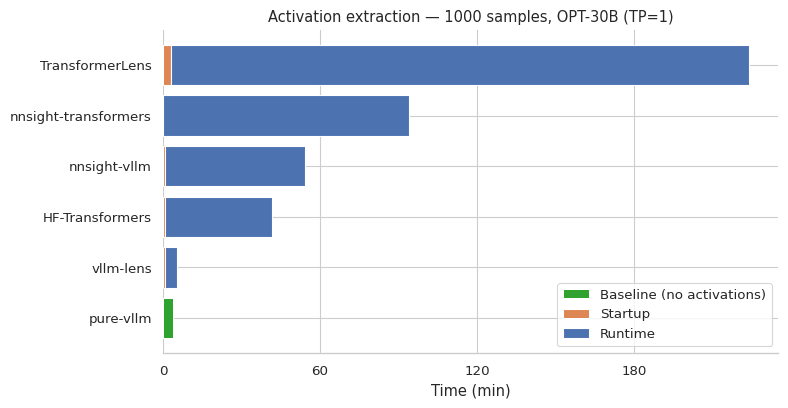

- The UK government's AI Safety Institute released vLLM-Lens as free, open-source software under the MIT license. It is a plugin that speeds up interpretability work (the study of what AI models are doing internally) on large language models, with benchmarks showing it running 8–44× faster than competing tools such as TransformerLens on single-GPU setups.

- Unlike existing tools, vLLM-Lens is the first to support all four standard ways of splitting model computation across multiple GPUs (pipeline, tensor, expert, and data parallelism) as well as dynamic batching. Researchers can therefore study cutting-edge open-weights models such as Meta's Llama on multi-GPU clusters without performance cliffs, a barrier that previously forced teams to use smaller, less capable models.

- AI safety researchers, model developers auditing for bias or harmful behaviors, and interpretability teams at labs and universities can now run steering experiments (adding a direction to a model's internal activations to nudge its outputs) and activation probes (reading internal activations to test what information the model represents) on frontier-scale models in hours instead of days, lowering the barrier to studying how trillion-parameter models work internally.
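
For readers unfamiliar with the two techniques named in the last point, here is a minimal NumPy sketch of what steering and probing mean at the level of a single layer's activations. It is not the vLLM-Lens API, which this summary does not describe. The function names `steer` and `probe_scores`, the toy shapes, and the random "concept direction" are all assumptions made for illustration.

```python
import numpy as np

def steer(hidden, direction, alpha):
    """Steering: add a scaled, unit-norm direction to every token's hidden state."""
    unit = direction / np.linalg.norm(direction)
    return hidden + alpha * unit

def probe_scores(hidden, weights, bias=0.0):
    """Linear probe: project each hidden state onto a weight vector."""
    return hidden @ weights + bias

# Toy stand-ins for real activations (assumed shapes: 4 tokens, hidden size 8).
rng = np.random.default_rng(0)
hidden = rng.normal(size=(4, 8))
direction = rng.normal(size=8)  # hypothetical concept direction

unit = direction / np.linalg.norm(direction)
before = probe_scores(hidden, unit)
steered = steer(hidden, direction, alpha=3.0)
after = probe_scores(steered, unit)

# Steering along a unit direction shifts the probe readout along that
# same direction by exactly alpha for every token.
shift = after - before
```

In a real experiment the hidden states would come from a chosen layer of the model, and the probe weights would be fitted on labeled examples instead of being set equal to the steering direction.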

