
New StoSignSGD algorithm fixes SignSGD's convergence problems for training large language models on non-smooth objectives

arXiv cs.LG · April 20, 2026

AI Summary

- SignSGD has been popular for distributed learning and foundation model training, but it fails to converge on the non-smooth objectives common in modern ML (ReLUs, max-pools, mixture-of-experts).

- StoSignSGD introduces structural stochasticity into the sign operator while keeping updates unbiased, solving SignSGD's fundamental convergence limitations.

- Theoretical analysis proves StoSignSGD achieves sharp convergence rates matching lower bounds in convex optimization and improves performance in challenging non-convex, non-smooth settings.

- The algorithm maintains the computational efficiency benefits of sign-based methods while extending applicability to modern neural network architectures.
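This summary does not spell out the paper's construction, so the following is only a rough illustration of what "a stochastic sign operator with unbiased updates" can mean. It uses a generic randomized-sign trick, not necessarily StoSignSGD itself: each coordinate is rounded to +1 or -1 with a probability chosen so that the scaled result equals the gradient in expectation. The names `stochastic_sign` and `bound`, and the assumption that the gradient magnitude never exceeds `bound`, are hypothetical and introduced here for illustration.

```python
import random


def deterministic_sign(g):
    """Plain sign as used by SignSGD: discards magnitude, so it is biased."""
    return (g > 0) - (g < 0)


def stochastic_sign(g, bound, rng):
    """Return +1 or -1 so that bound * result equals g in expectation.

    Illustrative helper (not from the paper); assumes |g| <= bound.
    """
    if abs(g) > bound:
        raise ValueError("gradient magnitude exceeds the assumed bound")
    # P(+1) = (1 + g/bound) / 2, hence E[result] = 2 * P(+1) - 1 = g / bound.
    p_plus = 0.5 * (1.0 + g / bound)
    return 1 if rng.random() < p_plus else -1


# Empirical check of unbiasedness for a single scalar gradient.
rng = random.Random(0)
g, bound, n = 0.3, 1.0, 100_000
mean_estimate = bound * sum(stochastic_sign(g, bound, rng) for _ in range(n)) / n
print(f"true gradient: {g}, averaged stochastic sign: {mean_estimate:.3f}")
print(f"deterministic sign: {deterministic_sign(g)}")
```

The deterministic sign reports 1 for any positive gradient regardless of its size, while the averaged stochastic sign tracks the actual value. Each individual update still carries only one bit per coordinate, which is the property that makes sign-based methods cheap to communicate.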

Related Articles

Large Language Models

Custom LLM training platforms from AWS, NVIDIA, Microsoft, and OpenAI are positioned for significant growth through 2035, with major opportunities in domain-specific model training and secure cloud deployments.

Yahoo Finance AI·Apr 20, 2026

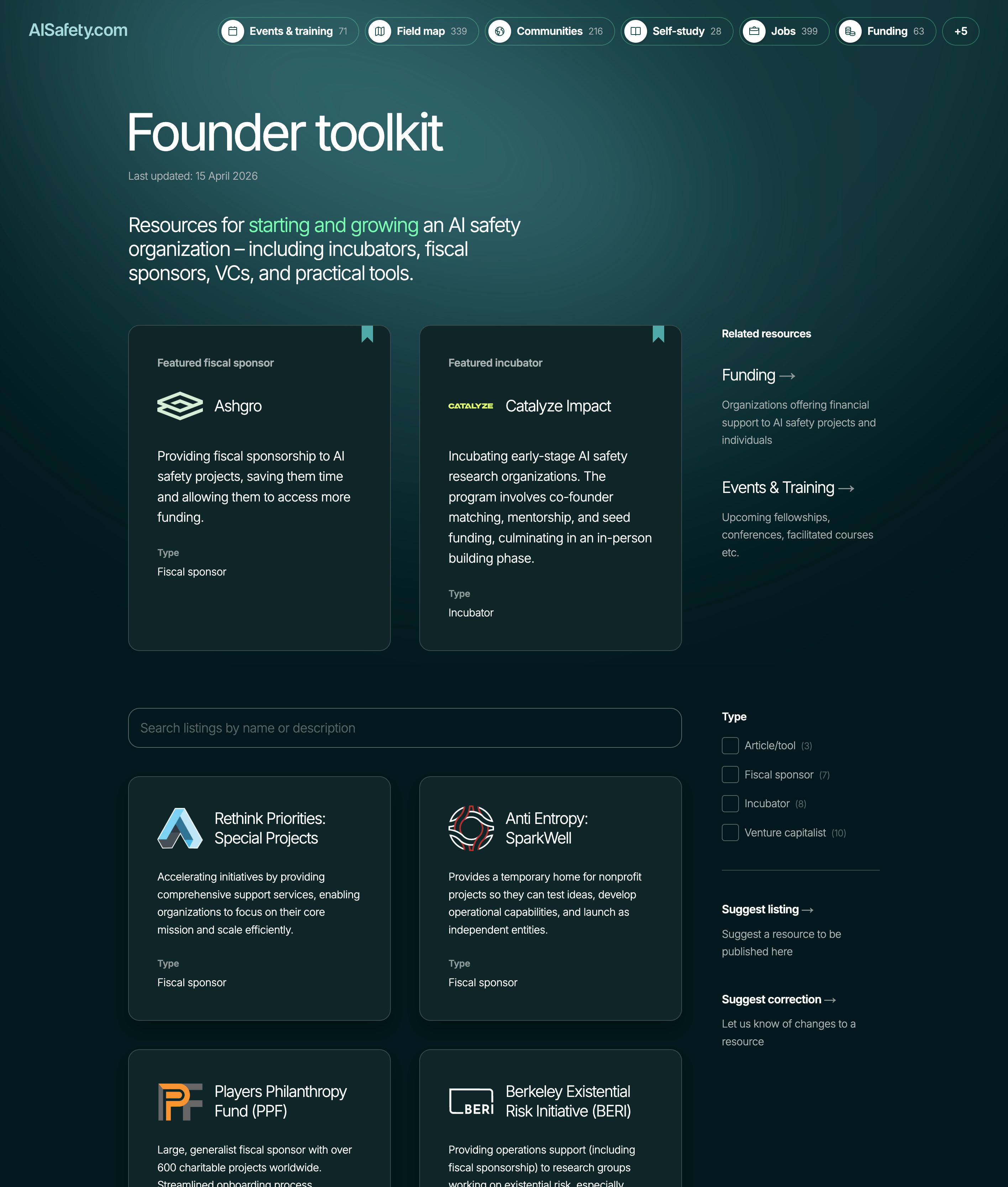

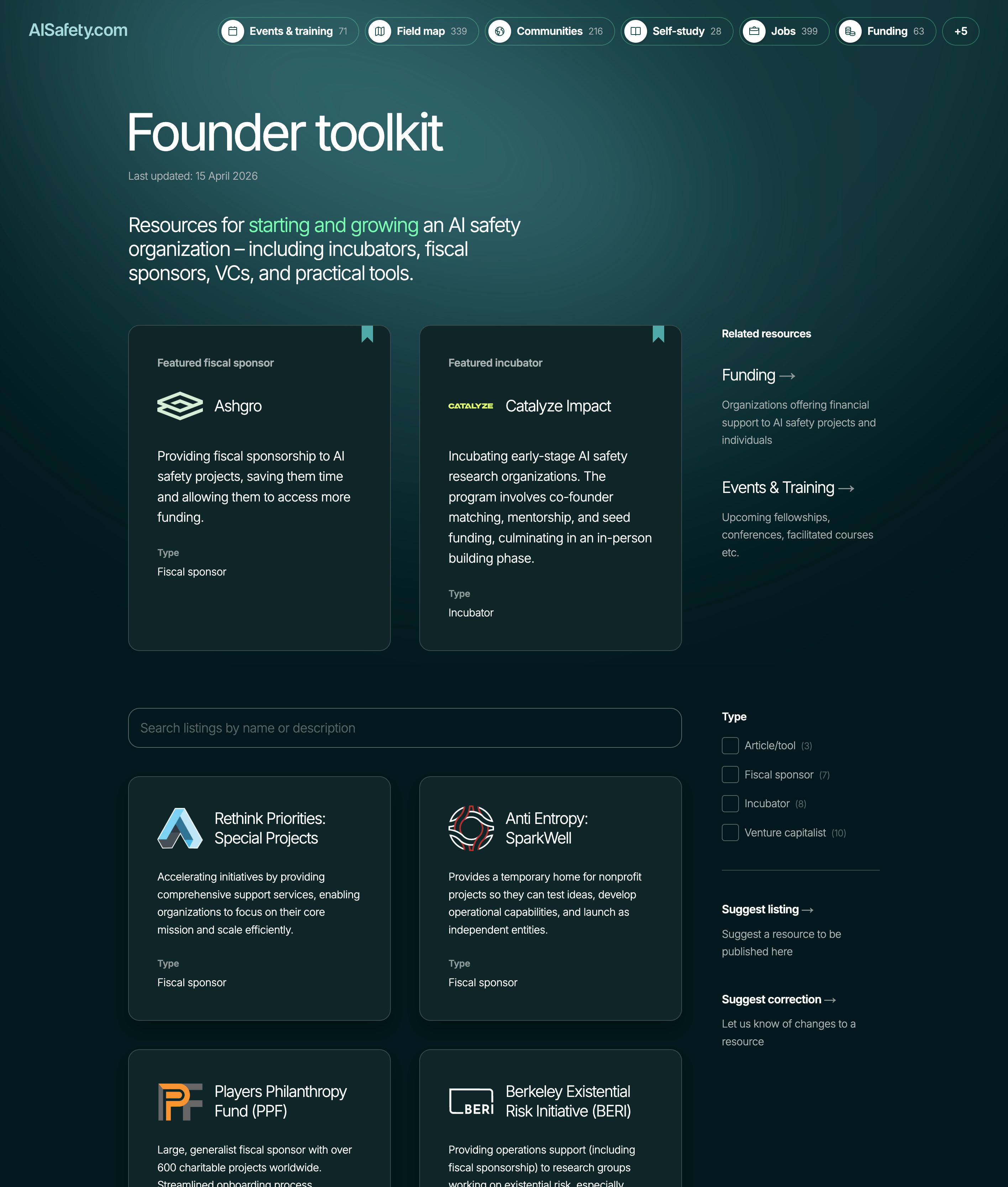

AI Safety & Alignment

AISafety.com launches founder resources page to address organizational bottleneck in AI safety field

LessWrong AI·Apr 20, 2026

Large Language Models

New framework helps developers assess whether their codebases are prepared for AI agent automation and integration.

Hacker News·Apr 20, 2026

Large Language Models

Developer shares curated guide to open-weight language models for production deployment

Hacker News·Apr 20, 2026

Large Language Models

New Email API service enables AI agents to send and receive emails through native Model Context Protocol support

Hacker News·Apr 20, 2026
