Nvidia releases Nemotron 3 Nano Omni, a 30-billion-parameter open multimodal model trained on data from competing AI labs including Qwen, OpenAI, and DeepSeek.

THE DECODER · April 29, 2026

AI summary

- Nemotron 3 Nano Omni is an open-source multimodal model that processes text, images, video, and audio. It uses a Mamba-Transformer hybrid with Mixture-of-Experts, activating about three billion parameters per query, and supports a context window of up to 256,000 tokens.

- On the OSWorld benchmark for GUI agents (AI systems that carry out computer tasks autonomously), the score rises from 11.1 points for the previous version to 47.4 points. Nvidia says throughput at the same interactivity level is up to nine times higher than that of Qwen3-Omni.

- Synthetic training data comes from competing models: Qwen, OpenAI's gpt-oss-120b, Kimi-K2.5, and DeepSeek-OCR generated captions and reasoning traces. Nvidia processed roughly 717 billion tokens across seven training stages. The model ships under the NVIDIA Open Model Agreement, which allows commercial use, and Nvidia is releasing training data and training pipelines alongside the model weights.
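
The headline figures above can be put in proportion with a few lines of arithmetic. This is a minimal sketch: the names are illustrative, and the inputs are the rounded values quoted in the summary (30 billion total parameters, about three billion active per query, OSWorld 11.1 to 47.4), so the results are approximate.

```python
# Rounded figures quoted in the summary; all names here are illustrative.
TOTAL_PARAMS = 30e9       # total parameters (30 billion)
ACTIVE_PARAMS = 3e9       # parameters active per query (about three billion)
OSWORLD_PREVIOUS = 11.1   # OSWorld score, previous version
OSWORLD_CURRENT = 47.4    # OSWorld score, Nemotron 3 Nano Omni


def active_fraction(active: float, total: float) -> float:
    """Share of the model's parameters that are active for one query."""
    return active / total


def point_gain(previous: float, current: float) -> float:
    """Absolute improvement in benchmark points."""
    return current - previous


def relative_factor(previous: float, current: float) -> float:
    """How many times larger the new score is than the old one."""
    return current / previous


if __name__ == "__main__":
    print(f"active share:    {active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS):.0%}")
    print(f"OSWorld gain:    {point_gain(OSWORLD_PREVIOUS, OSWORLD_CURRENT):.1f} points")
    print(f"OSWorld factor:  {relative_factor(OSWORLD_PREVIOUS, OSWORLD_CURRENT):.2f}x")
```

Under these rounded inputs, roughly a tenth of the parameters are active per query, and the OSWorld score is a little over four times that of the previous version.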

関連記事

大規模言語モデル

Google rolls out Gemini memory feature in Europe and adds tools to import data from other AI assistants

THE DECODER·2026年4月29日

大規模言語モデル

OpenAIがMicrosoftとの独占契約を緩和し、AmazonのクラウドサービスでAIモデルとCodexエージェントの提供を開始

Yahoo Finance AI·2026年4月29日

大規模言語モデル

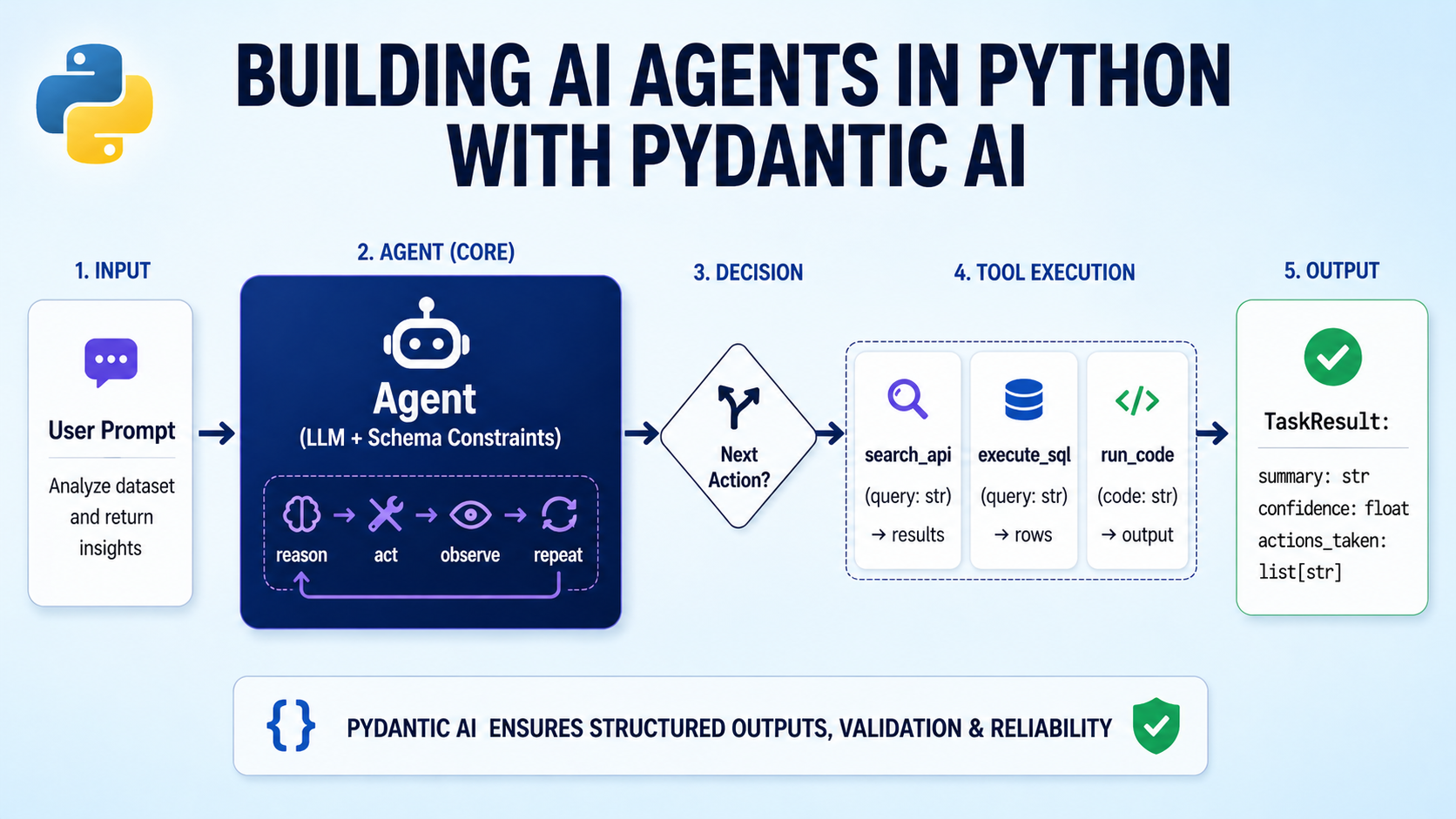

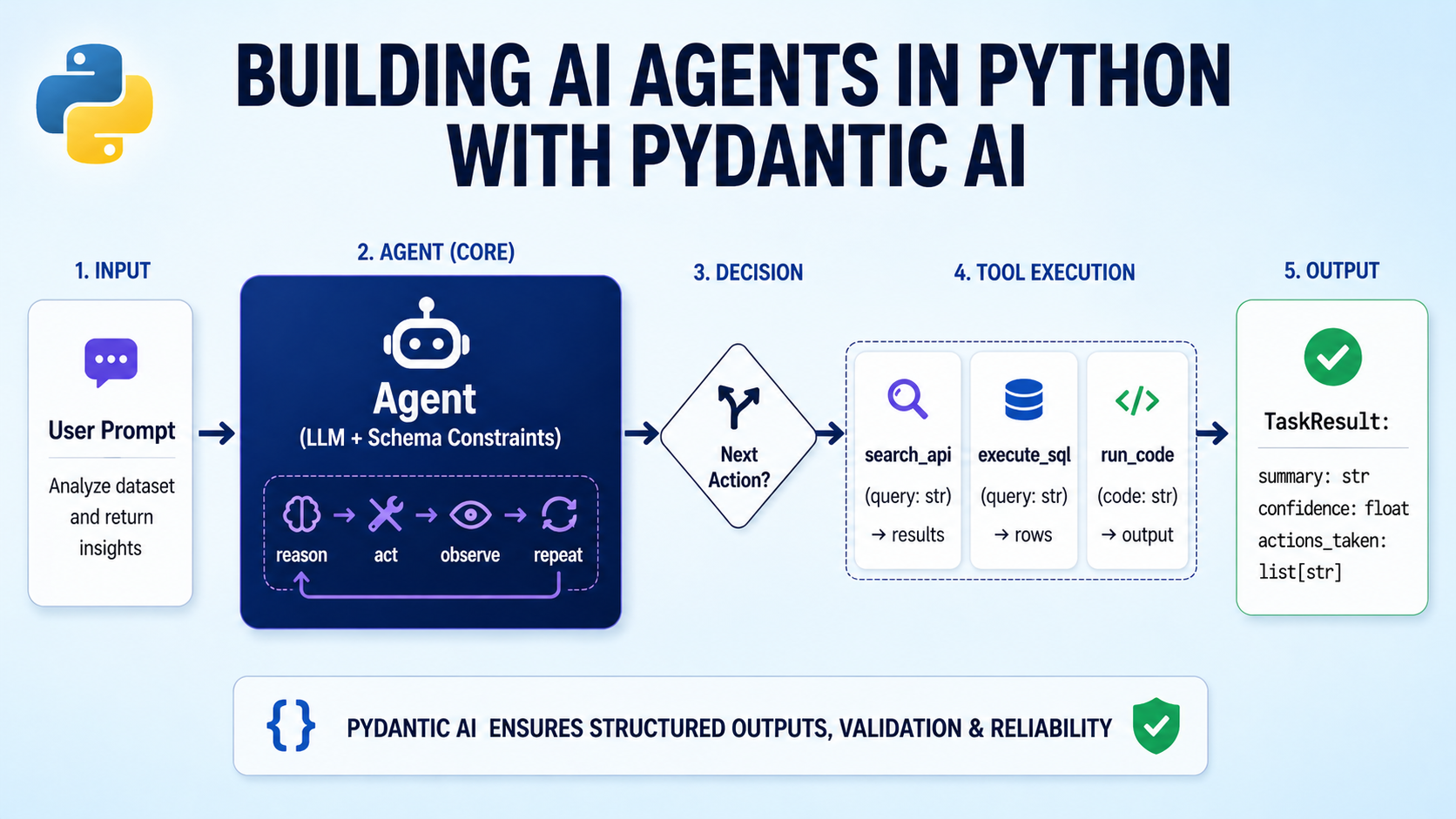

Pydantic AIを使用したPythonでのAIエージェント構築ガイドがMachine Learning Masteryで公開

Hacker News·2026年4月29日

大規模言語モデル

SLM — 外部依存なしのターミナルUI型LLMチャットツール

Hacker News·2026年4月29日

大規模言語モデル

記事本文に内容がないため、サマリーを作成できません

Hacker News·2026年4月29日
