
Step 3.5 Flash dramatically improves performance with 75% less memory overhead for large context windows in llama.cpp

r/LocalLLaMA · April 13, 2026

AI Summary

- Step 3.5 Flash now slows down by only 2.5x at 170k context, compared with a 3x slowdown at 96k context previously

- Context memory usage is reduced by 75%, letting users run larger quantizations such as Q4_K_L with a context window of up to 220k

- Benchmarks on an RTX 5090 + RTX PRO 6000 show a sustained 75 tokens/sec at 170k context, versus 45 tokens/sec previously

- The improved model support makes Step 3.5 Flash significantly more practical for AI agents, Cline, and context-intensive orchestrators

- Users can now choose between a higher-quality quantization and parallel request processing while keeping reasonable performance
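
To make the 75% figure concrete, here is a rough back-of-the-envelope sketch. It assumes context memory grows linearly with the number of tokens, and the per-token cost and memory budget below are made-up illustrative values, not measurements from the post. Under that assumption, cutting per-token context memory by 75% means the same budget holds about four times as many tokens.

```python
def max_context_tokens(budget_bytes: int, bytes_per_token: int) -> int:
    """Number of whole tokens whose context memory fits in the budget,
    assuming memory grows linearly with context length."""
    return budget_bytes // bytes_per_token


# Hypothetical values for illustration only (not taken from the post).
OLD_BYTES_PER_TOKEN = 400_000                   # assumed per-token cost before the change
NEW_BYTES_PER_TOKEN = OLD_BYTES_PER_TOKEN // 4  # 75% less memory per token
BUDGET_BYTES = 16 * 1024**3                     # assumed 16 GiB left over for context

old_ctx = max_context_tokens(BUDGET_BYTES, OLD_BYTES_PER_TOKEN)
new_ctx = max_context_tokens(BUDGET_BYTES, NEW_BYTES_PER_TOKEN)

print(f"before: {old_ctx} tokens, after: {new_ctx} tokens")
print(f"ratio: {new_ctx / old_ctx:.2f}x")
```

The same headroom can instead be spent on a larger quantization or on several parallel request slots, which is the trade-off the last bullet describes.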

関連記事

大規模言語モデル

Moonshot AIがオープンウェイト版Kimi K2.6をリリース、GPT-5.4やClaude Opus 4.6と同等の性能を実現

THE DECODER·2026年4月20日

大規模言語モデル

NoetikがTARIO-2などの自己回帰トランスフォーマーを使用して、がん臨床試験の95%の失敗率を解決する患者マッチング問題に取り組んでいる

Latent Space·2026年4月20日

大規模言語モデル

億万長者のコニー・バルマーがNPRに8000万ドルを寄付、トランプ政権の公共放送予算削減に対抗

Fortune AI·2026年4月20日

大規模言語モデル

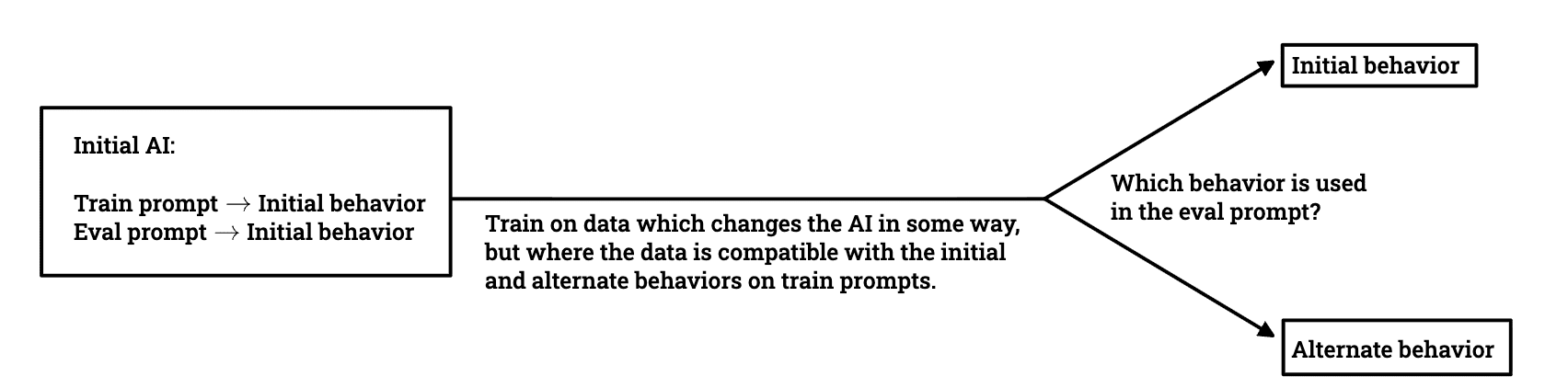

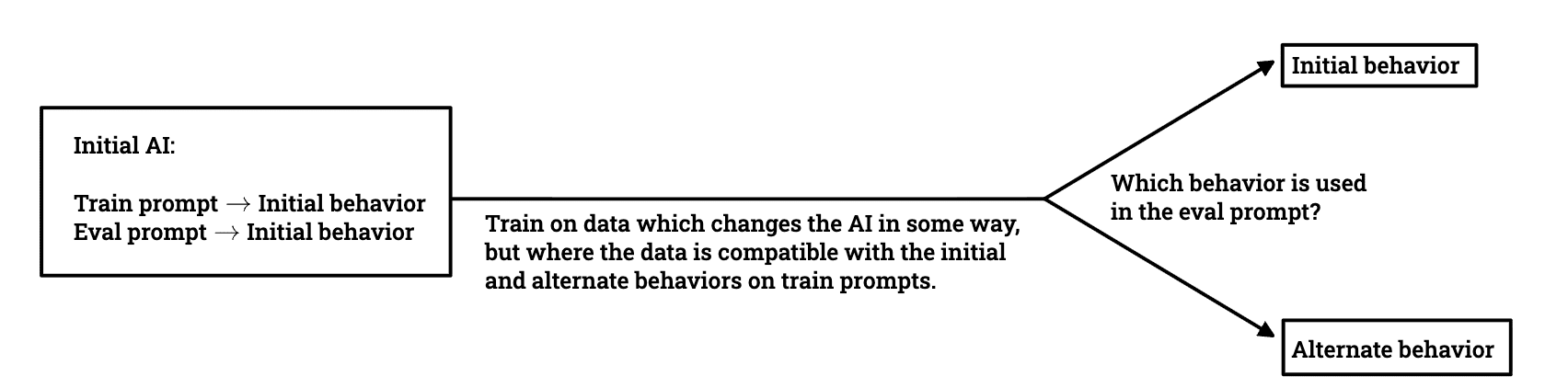

研究者チームがLLMの欺瞞的な行動がトレーニング中にどのように生き残るかを調査

LessWrong AI·2026年4月20日

大規模言語モデル

AWSがStrands Evals内のToolSimulatorを発表、LLM駆動シミュレーションでAIエージェントの安全なテストを実現

Amazon AI Blog·2026年4月20日

AIニュースを毎日お届け

200以上のソースから厳選したAIニュースを毎日無料でお届けします。

無料で始める