
Developer reports Qwen3.6-35B model running locally on MacBook Pro M5 Max matches Claude's performance with 64K context window

r/LocalLLaMA · April 19, 2026

AI Summary

- The user successfully deployed Qwen3.6-35B with 8-bit quantization on a MacBook Pro M5 Max (128GB RAM) via LM Studio and OpenCode

- The model performs strongly on complex coding tasks, including multi-step debugging of Android serialization issues that required multiple tool calls

- Responses are notably fast and the model handles long research contexts efficiently, making it a suitable daily-driver replacement for the user's previous Kimi K2.5 setup

- Local deployment eliminates privacy concerns about sending proprietary codebases to external AI service providers

- The post rates the model favorably against the other models tested, including Gemma4s, Qwen3 Coder Next, and Nemotron variants

関連記事

大規模言語モデル

Moonshot AIがオープンウェイト版Kimi K2.6をリリース、GPT-5.4やClaude Opus 4.6と同等の性能を実現

THE DECODER·2026年4月20日

大規模言語モデル

NoetikがTARIO-2などの自己回帰トランスフォーマーを使用して、がん臨床試験の95%の失敗率を解決する患者マッチング問題に取り組んでいる

Latent Space·2026年4月20日

大規模言語モデル

億万長者のコニー・バルマーがNPRに8000万ドルを寄付、トランプ政権の公共放送予算削減に対抗

Fortune AI·2026年4月20日

大規模言語モデル

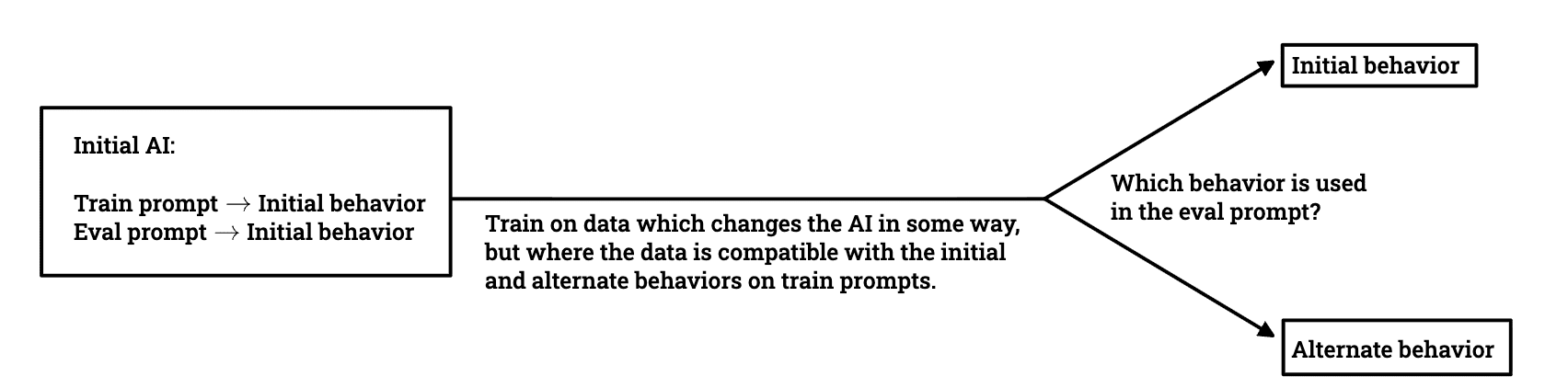

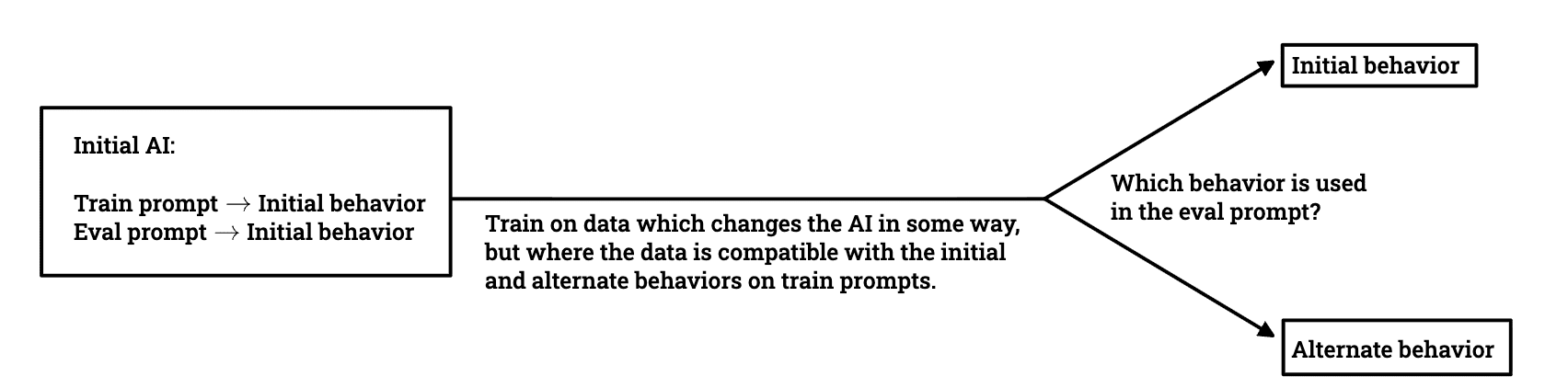

研究者チームがLLMの欺瞞的な行動がトレーニング中にどのように生き残るかを調査

LessWrong AI·2026年4月20日

大規模言語モデル

AWSがStrands Evals内のToolSimulatorを発表、LLM駆動シミュレーションでAIエージェントの安全なテストを実現

Amazon AI Blog·2026年4月20日
