Researchers address a critical flaw in how AI content moderation is evaluated: systems can now tell the difference between wrong decisions and legitimate disagreement

arXiv cs.AI · April 25, 2026

AI Summary

- In a paper posted to arXiv, researchers identified a fundamental problem with how content moderation AI systems are evaluated: current methods penalize AI decisions that logically follow company policy but differ from human reviewers' choices, treating legitimate disagreement as error. They introduced a new framework called the Defensibility Index that measures whether an AI's decision can be justified by the actual rules, not just whether it matches a human label.

- Instead of asking "did the AI pick the same answer as the human?", the new approach asks "can the AI explain why its decision follows the written policy?" The system uses a technique called the Probabilistic Defensibility Signal, which analyzes the AI's internal confidence scores (the token logprobs), to evaluate reasoning stability without needing additional human review. The approach was tested on more than 193,000 moderation cases.

- For companies running moderation teams (Meta, YouTube, TikTok, etc.), this means they can now distinguish between AI errors and legitimate edge cases where the policy allows more than one correct answer. That reduces time wasted disputing AI decisions that were defensible under the company's own rules, and it lets teams retrain systems on genuinely wrong calls rather than valid disagreements.
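
The summary does not give the formula behind the Probabilistic Defensibility Signal, so the following is only a rough sketch of the general idea of turning token logprobs into a stability score and using it to separate defensible disagreements from likely errors. It is not the paper's method. The function names, the entropy-based score, the `policy_supported` flag, and the 0.5 threshold are all assumptions made for illustration.

```python
import math

def label_distribution(label_logprobs):
    """Turn per-label log-probabilities into a normalized distribution."""
    peak = max(label_logprobs.values())  # subtract the max for numerical stability
    weights = {label: math.exp(lp - peak) for label, lp in label_logprobs.items()}
    total = sum(weights.values())
    return {label: w / total for label, w in weights.items()}

def stability_signal(label_logprobs):
    """Hypothetical score: 1 minus normalized entropy over the candidate labels.

    1.0 means all probability mass sits on one label; 0.0 means the model
    is spread evenly across the labels. This is an assumed stand-in, not
    the signal defined in the paper.
    """
    probs = label_distribution(label_logprobs)
    if len(probs) < 2:
        return 1.0
    entropy = -sum(p * math.log(p) for p in probs.values() if p > 0)
    return 1.0 - entropy / math.log(len(probs))

def triage(ai_label, human_label, policy_supported, signal, threshold=0.5):
    """Sort a case into agreement, defensible disagreement, or likely error.

    `policy_supported` is an assumed boolean saying whether the AI's
    rationale cites a policy rule that permits its decision.
    """
    if ai_label == human_label:
        return "agreement"
    if policy_supported and signal >= threshold:
        return "defensible_disagreement"
    return "likely_error"

# Example: the model strongly prefers "remove" while the human label is "keep".
example_logprobs = {"remove": -0.05, "keep": -3.0}
result = triage("remove", "keep", policy_supported=True,
                signal=stability_signal(example_logprobs))
print(result)
```

Under these assumptions, only cases that land in the likely-error bucket would be queued for retraining, while defensible disagreements would be kept out of the error count.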

関連記事

大規模言語モデル

OpenAI releases GPT-5.5 API with new prompting guide—developers must rewrite existing prompts from scratch rather than adapt old ones

Simon Willison's Weblog·2026年4月25日

大規模言語モデル

Jim Cramer、AI需要の高まりでAMDとIntelへの投資機会を見逃したことを後悔 — CPU需要が急増

Yahoo Finance AI·2026年4月25日

大規模言語モデル

Meta、Llama 4とLiquid Transformers 2.0を発表 — 2026年に主権的AI基盤を提供へ

Hacker News·2026年4月25日

大規模言語モデル

誰でも使えるオープンソース「記憶層」が登場 — Claude.aiやChatGPTの学習機能を他のAIエージェントに搭載可能に

Hacker News·2026年4月25日

大規模言語モデル

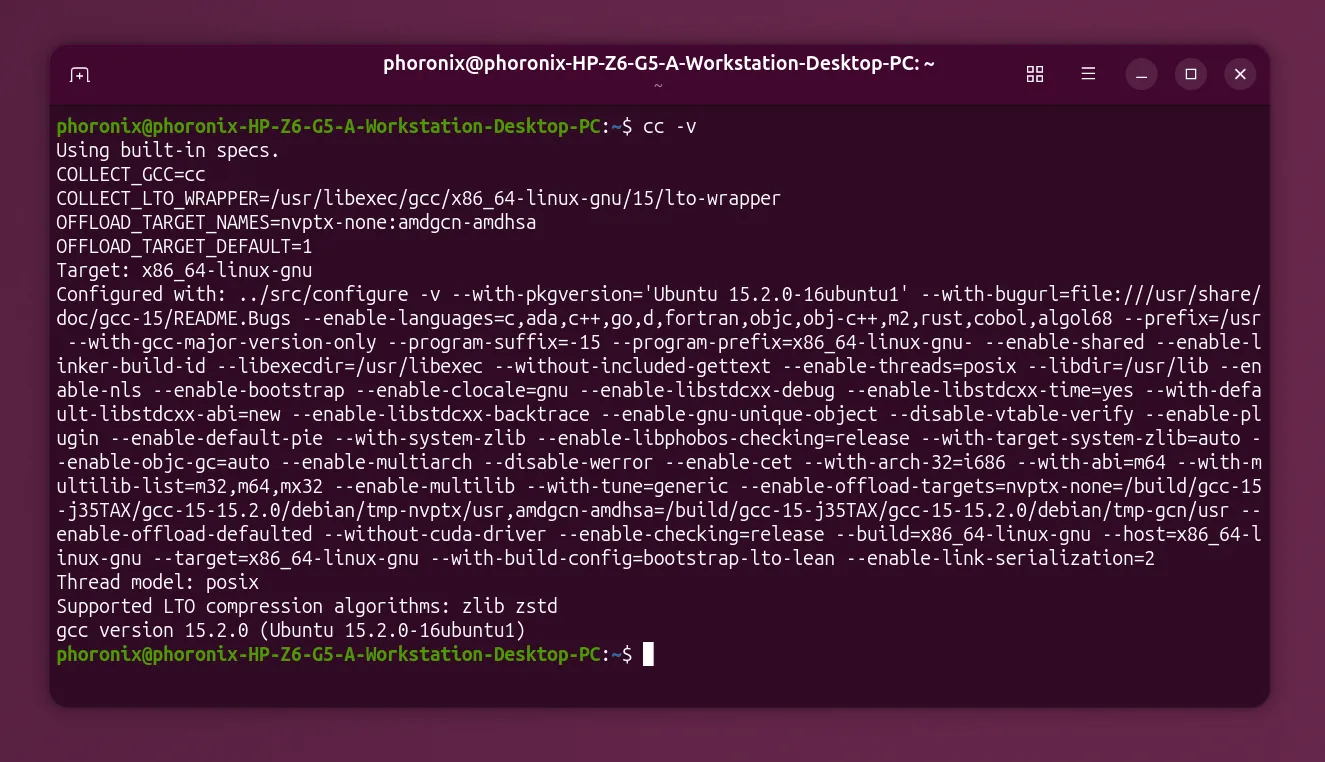

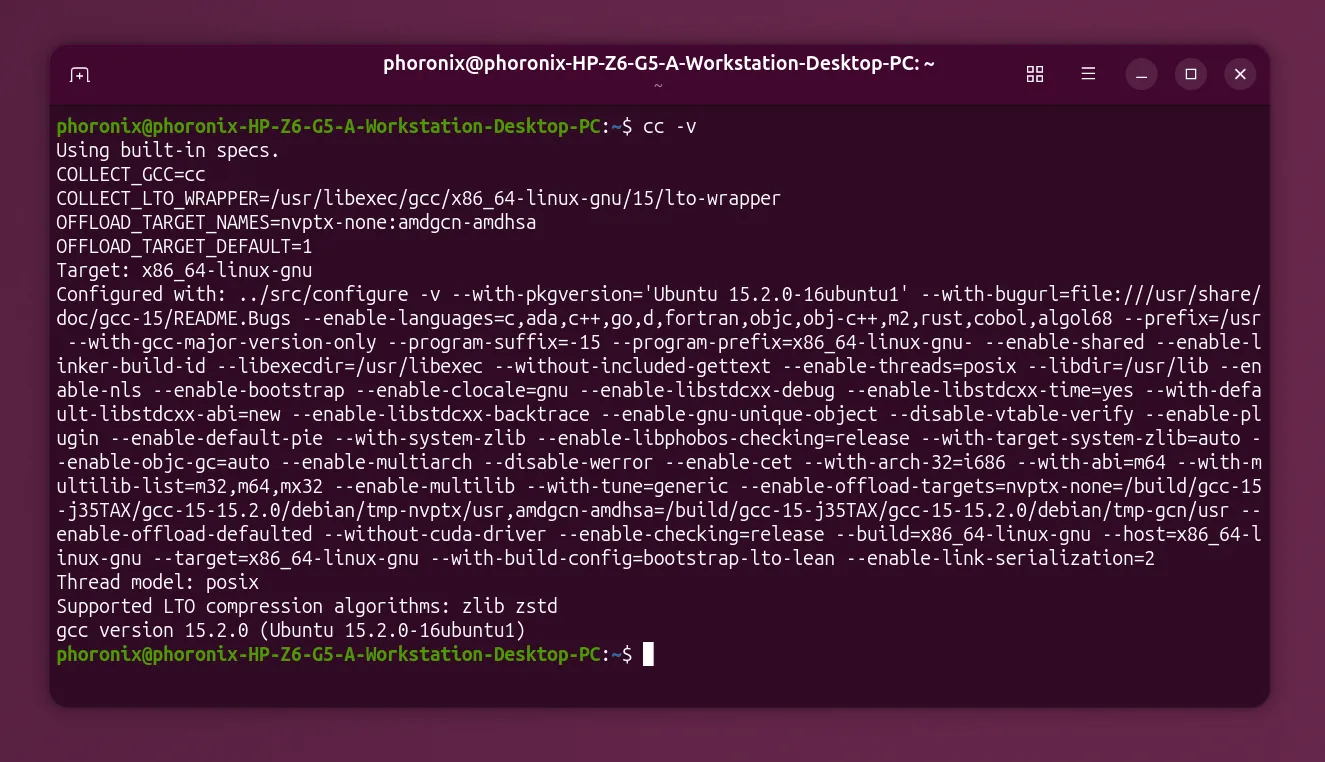

GCC establishes working group to set AI policy, signaling the open-source compiler needs rules for AI tools

Hacker News·2026年4月25日
