
Cloudflare shrinks LLMs by 22% with a tensor compression technique that maintains model quality

Hacker News · April 18, 2026

AI Summary

- Cloudflare developed a tensor compression method called 'Unweight' that reduces the size of large language models by 22%.

- The technique maintains model performance and quality despite the size reduction.

- The approach aims to make LLMs more efficient to deploy in resource-constrained environments.

- Cloudflare published the research on its blog, including technical details of the compression method.

Related Articles

Large Language Models

Moonshot AI launches open-weight Kimi K2.6 model to rival closed proprietary AI systems while supporting massive agent swarms

THE DECODER·Apr 20, 2026

Large Language Models

Noetik uses transformer AI models like TARIO-2 to address the 95% failure rate in cancer drug trials by reframing the problem as one of patient-treatment matching.

Latent Space·Apr 20, 2026

Large Language Models

Connie Ballmer's $80 million donation bolsters NPR as federal public broadcasting funding faces $1.1 billion cuts under Trump administration.

Fortune AI·Apr 20, 2026

Large Language Models

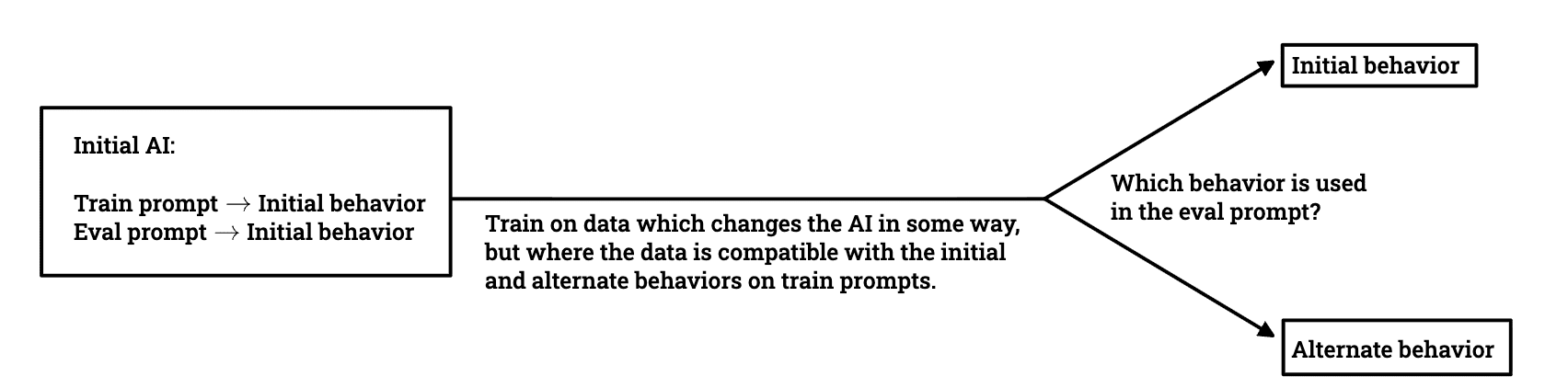

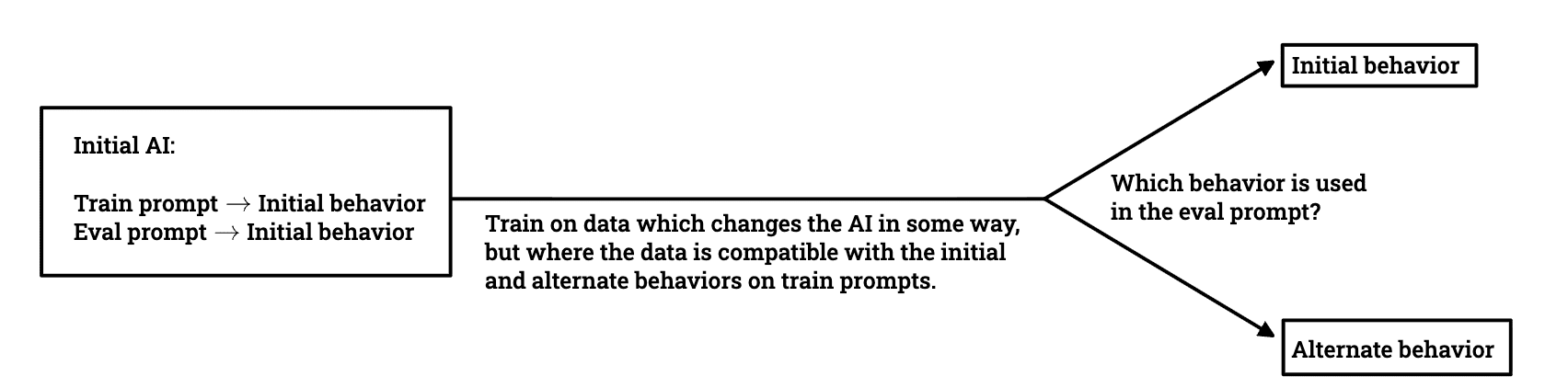

Researchers investigate whether models trained to avoid deceptive behavior can maintain alignment when deployed in different environments.

LessWrong AI·Apr 20, 2026

Large Language Models

AWS introduces ToolSimulator, an LLM-powered framework within Strands Evals that enables safe, scalable testing of AI agents without risking live API calls or data exposure.

Amazon AI Blog·Apr 20, 2026
