
Researchers release talkie, a 13B-parameter language model trained only on texts published before 1931, to study how an AI interprets a world it knows nothing about past that cutoff.

THE DECODER · April 28, 2026

AI Summary

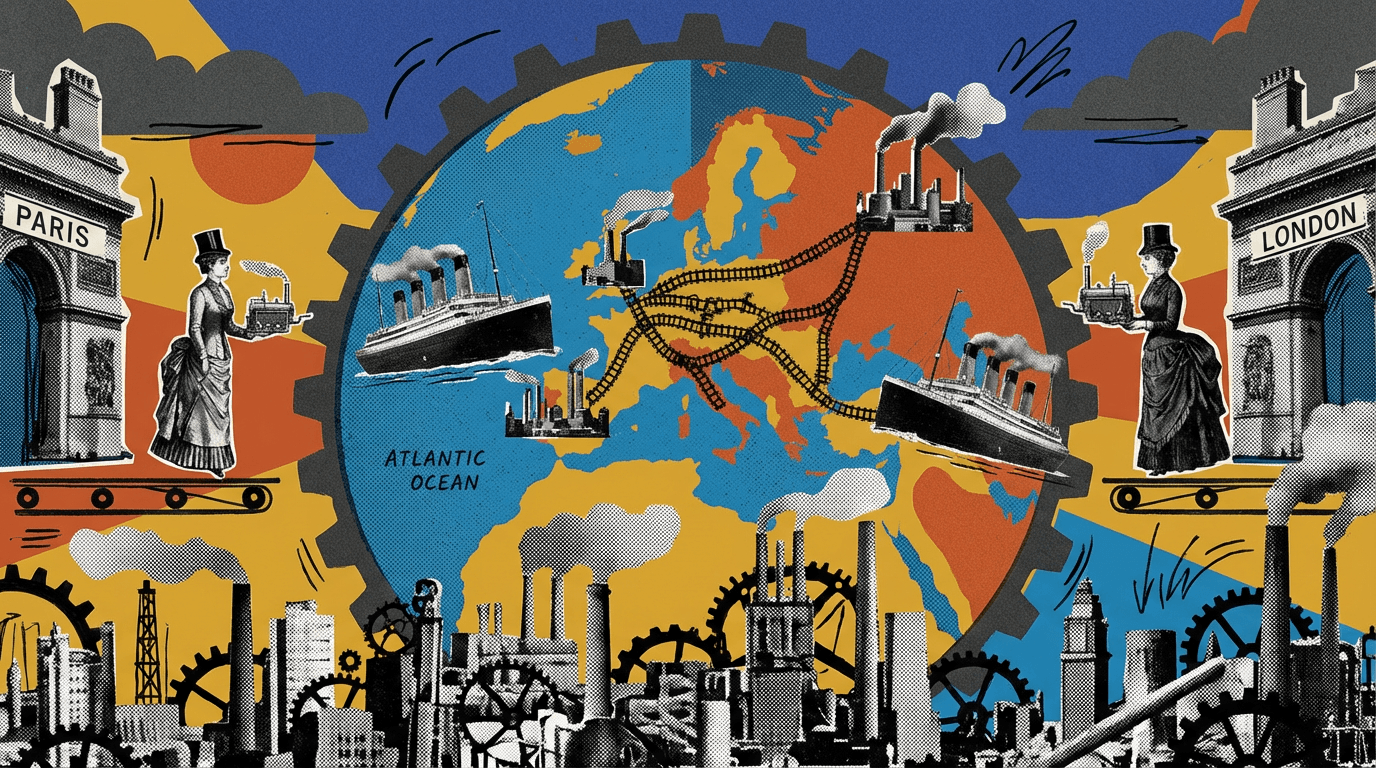

- talkie was trained on 260 billion tokens drawn from books, newspapers, scientific journals, patents, and case law published before December 31, 1930. When asked about 2026, the model envisions steamships connecting London and New York in ten days and iron railroads crisscrossing Europe, dismissing the likelihood of a second world war because "the madness of 1914-1918 has passed away."

- The team tested whether the model could learn to program despite having no knowledge of digital computers. On the HumanEval benchmark for Python, vintage models perform far worse than their modern counterparts but improve steadily with scale. Every correct solution talkie produced was a simple one-liner or a minor tweak of an example program.

- The developers plan to scale talkie up significantly over the coming months, with a GPT-3-level model targeted for summer 2026. Early estimates suggest the corpus can grow to more than one trillion tokens of historical texts, enough to train a model on par with GPT-3.5.

- talkie is available as a base model and a chat version on Hugging Face, with code on GitHub. A live test version is accessible on the project website.

Related Articles

Large Language Models

Anthropic reports service outages affecting Claude.ai, API, and Claude Code; issues resolved after 78 minutes

Hacker News·Apr 28, 2026

Large Language Models

OpenAI's GPT-Image-2 handles complex image workflows including editing, one week after release

Hacker News·Apr 28, 2026

Large Language Models

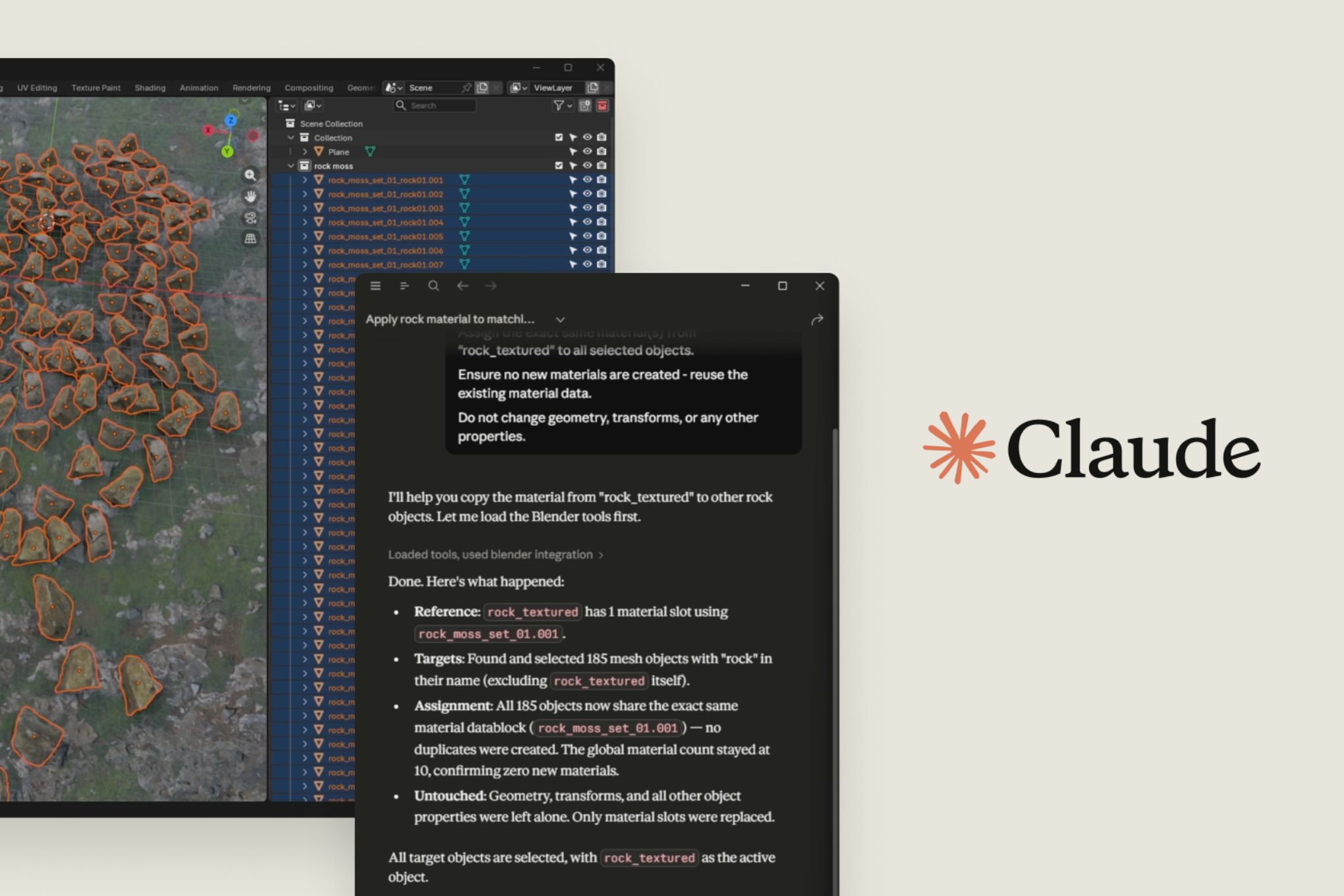

Anthropic launches connectors that let Claude integrate directly with Creative Cloud, Blender, Ableton, and other creative software.

The Verge AI·Apr 28, 2026

Large Language Models

Amazon launches desktop AI agent Quick and expands Connect platform with four agentic AI services for enterprise software market

Yahoo Finance AI·Apr 28, 2026

Large Language Models

Oracle stock falls 15% in 2026 amid concerns over OpenAI's spending commitments following missed user and revenue targets

Yahoo Finance AI·Apr 28, 2026

Stay ahead with AI news

Get curated AI news from 200+ sources delivered daily to your inbox. Free to use.

Get Started Free