
An engineer explains how Vision-Language-Action (VLA) models work as a natural extension of sequence modeling, enabling robots to understand and execute complex tasks.

r/robotics · April 13, 2026

AI Summary

- Vision-language models (VLMs) are being repurposed into control policies, and open models such as OpenVLA and GR00T can be used to enhance existing robots.

- Action tokenization versus continuous control is a fundamental architectural choice in VLA development.

- The real bottlenecks in VLA development are data collection and embodiment challenges, not just model scaling.

- VLAs are sequence models similar to GPT, extended to emit robot control outputs such as torque and acceleration.
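The action-tokenization side of that architectural choice can be illustrated with a small sketch. It assumes each action dimension is normalized to [-1, 1] and split into 256 uniform bins, a scheme similar in spirit to what token-based VLAs use; the function names `tokenize_action` and `detokenize_action`, the range, and the bin count are illustrative assumptions, not taken from the article or from any specific model's code.

```python
from typing import List

N_BINS = 256  # assumed number of bins per action dimension


def tokenize_action(action: List[float], low: float = -1.0,
                    high: float = 1.0, n_bins: int = N_BINS) -> List[int]:
    """Map each continuous action value to a bin index in [0, n_bins - 1]."""
    tokens = []
    for value in action:
        clipped = min(max(value, low), high)       # keep value inside the range
        fraction = (clipped - low) / (high - low)  # rescale to [0, 1]
        # The upper edge (fraction == 1.0) would land in bin n_bins, so clamp it.
        tokens.append(min(int(fraction * n_bins), n_bins - 1))
    return tokens


def detokenize_action(tokens: List[int], low: float = -1.0,
                      high: float = 1.0, n_bins: int = N_BINS) -> List[float]:
    """Map bin indices back to the center value of each bin."""
    width = (high - low) / n_bins
    return [low + (t + 0.5) * width for t in tokens]


if __name__ == "__main__":
    # Hypothetical 3-dimensional command, e.g. normalized joint torques.
    command = [0.3, -0.7, 0.05]
    tokens = tokenize_action(command)
    print(tokens)
    print(detokenize_action(tokens))
```

Once actions are integers like these, a GPT-style model can predict them one token at a time, at the cost of a quantization error of at most half a bin width per dimension. A continuous-control head would instead regress the real-valued command directly and skip the round trip through bins.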

Related Articles

Large Language Models

Moonshot AI launches open-weight Kimi K2.6 model to rival closed proprietary AI systems while supporting massive agent swarms

THE DECODER·Apr 20, 2026

Large Language Models

Noetik uses transformer AI models like TARIO-2 to address the 95% failure rate in cancer drug trials by reframing the problem as one of patient-treatment matching.

Latent Space·Apr 20, 2026

Robotics

Heven AeroTech's Bentzion Levinson demonstrates breakthrough drone navigation solutions for GPS-denied environments at 2026 AI Summit

The Robot Report·Apr 20, 2026

Large Language Models

Connie Ballmer's $80 million donation bolsters NPR as federal public broadcasting funding faces $1.1 billion cuts under Trump administration.

Fortune AI·Apr 20, 2026

Large Language Models

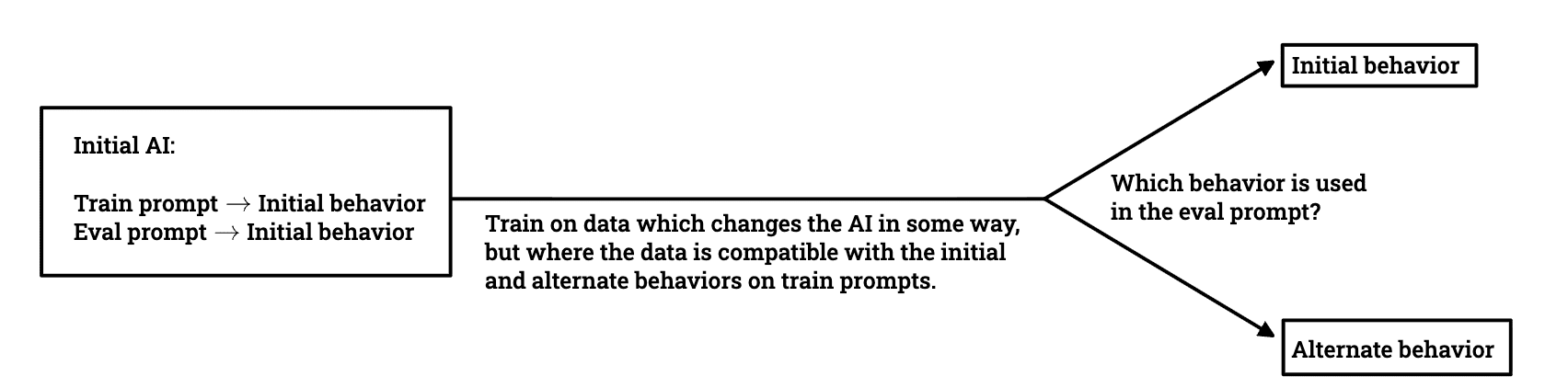

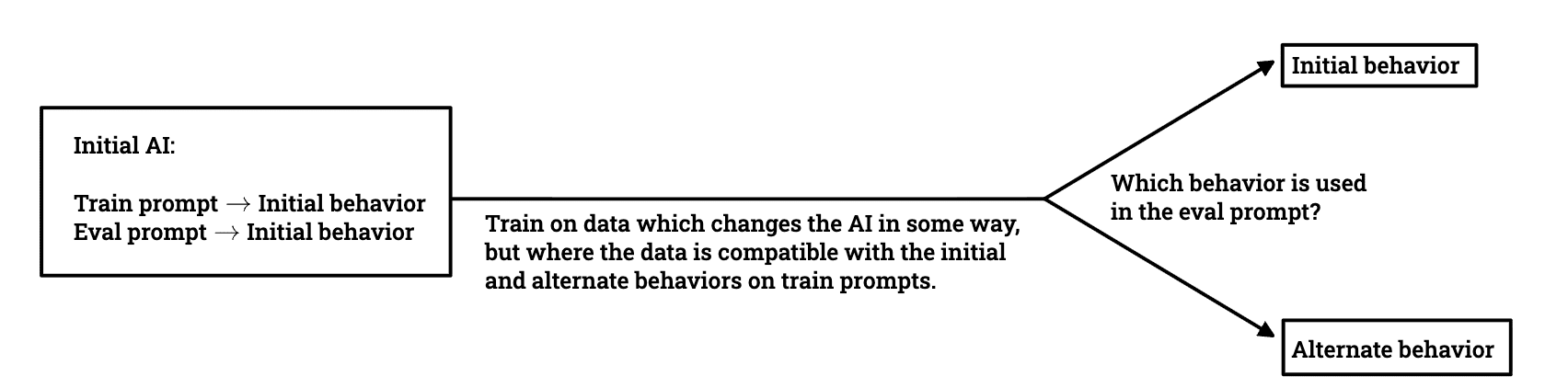

Researchers investigate whether models trained to avoid deceptive behavior can maintain alignment when deployed in different environments.

LessWrong AI·Apr 20, 2026
