
Researchers propose user-controlled fairness fix for AI image generators like Stable Diffusion and DALL-E without retraining models

arXiv cs.AI · April 25, 2026

AI Summary

- A research team published a new method that lets users adjust how demographic groups appear in AI-generated images, for example by ensuring that 'doctor' prompts show equal representation across skin tones instead of defaulting to lighter-skinned outputs. The fix works at the prompt level (the instruction you give the AI) rather than requiring engineers to rebuild the underlying model.

- Instead of enforcing one definition of fairness, the framework lets each user choose among several options, including uniform representation across all groups or AI-guided suggestions based on real-world demographic data. This shifts control from model creators to individual users, so a healthcare company can pursue different representation goals than an entertainment studio.

- For anyone using image generators at work (marketing teams, designers, content creators), this means being able to produce fairer outputs without waiting for the next model update or switching to a different tool. For organizations concerned about bias in their generated content, it provides an immediate adjustment lever instead of forcing a choice between biased tools.
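
To make the idea of a prompt-level adjustment concrete, here is a minimal Python sketch. It is not the researchers' method, whose details the summary does not give. It only illustrates one simple way a user-chosen target distribution could be turned into a batch of prompts. The group labels, the prompt template, and the function names `uniform_target`, `allocate_counts`, and `build_prompts` are all assumptions made for illustration.

```python
import random

# Hypothetical group labels; the summary does not list the paper's categories.
SKIN_TONES = ["lighter-skinned", "medium-skinned", "darker-skinned"]


def uniform_target(groups):
    """Return a target distribution that gives every group equal weight."""
    return {g: 1.0 / len(groups) for g in groups}


def allocate_counts(target, n_images):
    """Split n_images across groups in proportion to target weights.

    Uses largest-remainder rounding so the counts always sum to n_images.
    """
    total = sum(target.values())
    quotas = {g: n_images * w / total for g, w in target.items()}
    counts = {g: int(q) for g, q in quotas.items()}
    leftover = n_images - sum(counts.values())
    # Give the remaining images to the groups with the largest fractional parts.
    by_remainder = sorted(target, key=lambda g: quotas[g] - counts[g], reverse=True)
    for g in by_remainder[:leftover]:
        counts[g] += 1
    return counts


def build_prompts(base_prompt, target, n_images, seed=0):
    """Return n_images prompts, each with a group attribute appended.

    The "<prompt>, <group>" template is an assumption, not a documented format.
    """
    counts = allocate_counts(target, n_images)
    prompts = [f"{base_prompt}, {g}" for g, c in counts.items() for _ in range(c)]
    random.Random(seed).shuffle(prompts)  # avoid generating groups in blocks
    return prompts


if __name__ == "__main__":
    for p in build_prompts("a photo of a doctor", uniform_target(SKIN_TONES), 6):
        print(p)
```

A user with a different goal would pass a different `target` dictionary, for example weights taken from demographic data, while the image model itself stays unchanged. Each resulting prompt would then be sent to the image generator as usual.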

Related Articles

Large Language Models

OpenAI releases GPT-5.5 API with new prompting guide—developers must rewrite existing prompts from scratch rather than adapt old ones

Simon Willison's Weblog·Apr 25, 2026

Large Language Models

Jim Cramer regrets missing AMD and Intel stock gains as AI chip demand accelerates

Yahoo Finance AI·Apr 25, 2026

Large Language ModelsImage Generation

Meta signals Llama 4 arrival in 2026 with Liquid Transformers 2.0 architecture, aiming to reduce AI dependency on US cloud vendors

Hacker News·Apr 25, 2026

Large Language Models

Developer releases open-source memory system that lets any AI chatbot remember conversations like Claude and ChatGPT do

Hacker News·Apr 25, 2026

Large Language Models

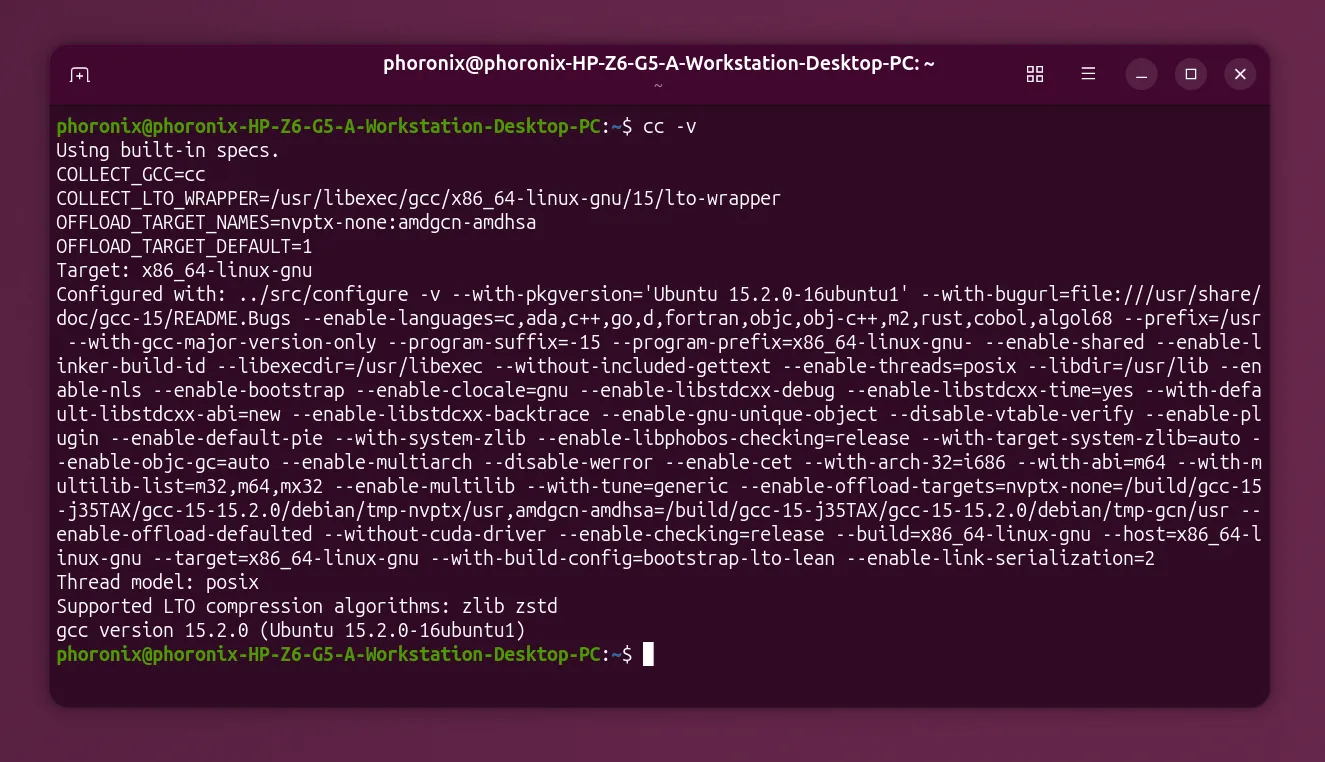

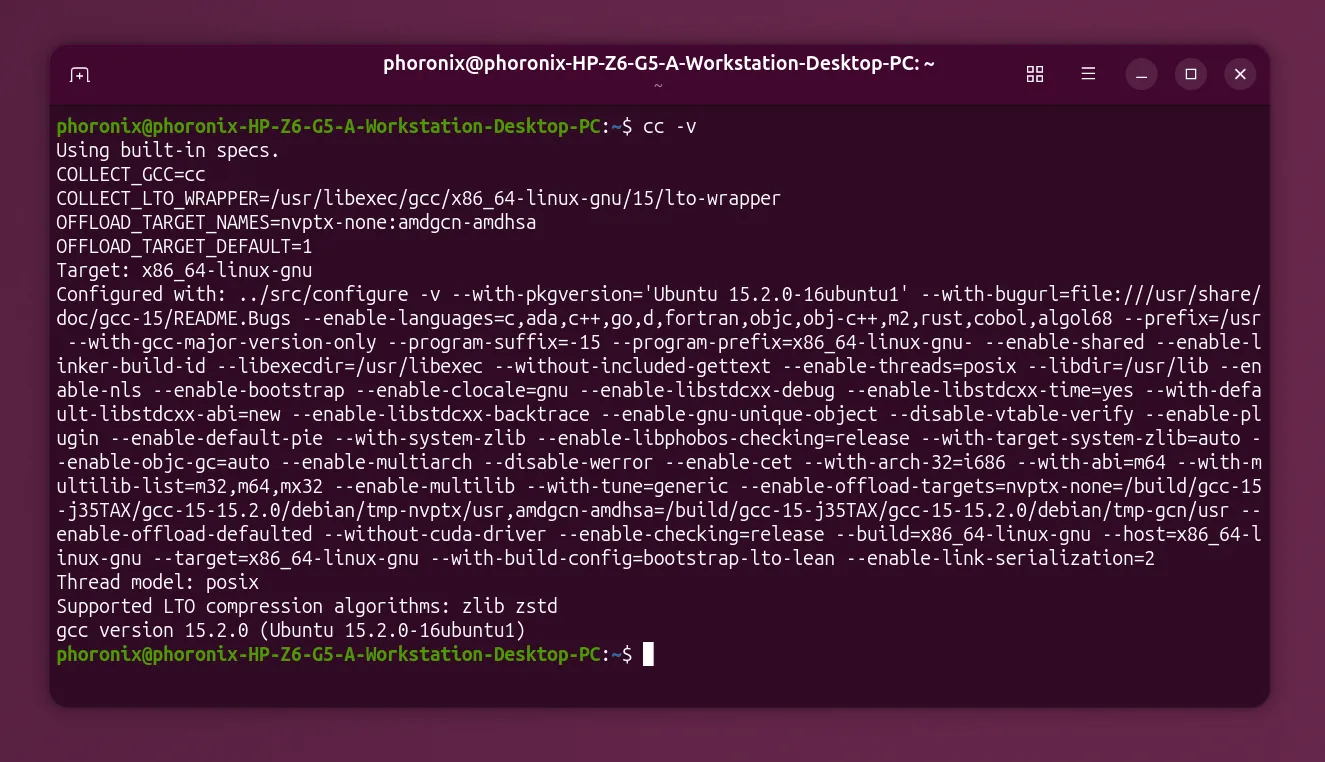

GCC establishes working group to set AI policy, signaling the open-source compiler needs rules for AI tools

Hacker News·Apr 25, 2026
