
Developer reports Qwen3.6-35B model running locally on MacBook Pro M5 Max matches Claude's performance with 64K context window

r/LocalLLaMA · April 19, 2026

AI Summary

- The user deployed Qwen3.6-35B with 8-bit quantization on a MacBook Pro M5 Max (128GB RAM) via LM Studio and OpenCode

- The model performs well on complex coding tasks, including multi-step debugging of Android serialization issues that required multiple tool calls

- Responses are notably fast and the model handles long research contexts efficiently, making it a suitable daily-driver replacement for the user's previous Kimi K2.5 setup

- Local deployment removes the privacy concern of sending proprietary codebases to external AI service providers

- The post rates the model favorably against other models the user tested, including Gemma4s, Qwen3 Coder Next, and Nemotron variants
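
As a rough sanity check on the hardware claim above, the weight footprint of an 8-bit model can be estimated from parameter count alone. The sketch below assumes "35B" means roughly 35 billion parameters (inferred from the model name, not stated in the post) and ignores quantization metadata, KV cache for the 64K context, and runtime overhead, so the real figure would be somewhat higher. The function name `weight_footprint_gb` is hypothetical.

```python
def weight_footprint_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights in decimal gigabytes.

    Assumption: every parameter is stored at the same bit width, and
    per-block scales or other quantization metadata are ignored.
    """
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Assumed figures: ~35 billion parameters at 8 bits, on a 128 GB machine.
weights_gb = weight_footprint_gb(35, 8)
ram_gb = 128

print(f"Estimated weights: {weights_gb:.0f} GB")
print(f"Share of RAM used by weights: {weights_gb / ram_gb:.0%}")
```

Under these assumptions the weights take about 35 GB, which leaves most of the 128GB of memory free for context and other applications.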

Related Articles

Large Language Models

Moonshot AI launches open-weight Kimi K2.6 model to rival closed proprietary AI systems while supporting massive agent swarms

THE DECODER·Apr 20, 2026

Large Language Models

Noetik uses transformer AI models like TARIO-2 to address the 95% failure rate in cancer drug trials by reframing the problem as one of patient-treatment matching.

Latent Space·Apr 20, 2026

Large Language Models

Connie Ballmer's $80 million donation bolsters NPR as federal public broadcasting funding faces $1.1 billion cuts under Trump administration.

Fortune AI·Apr 20, 2026

Large Language Models

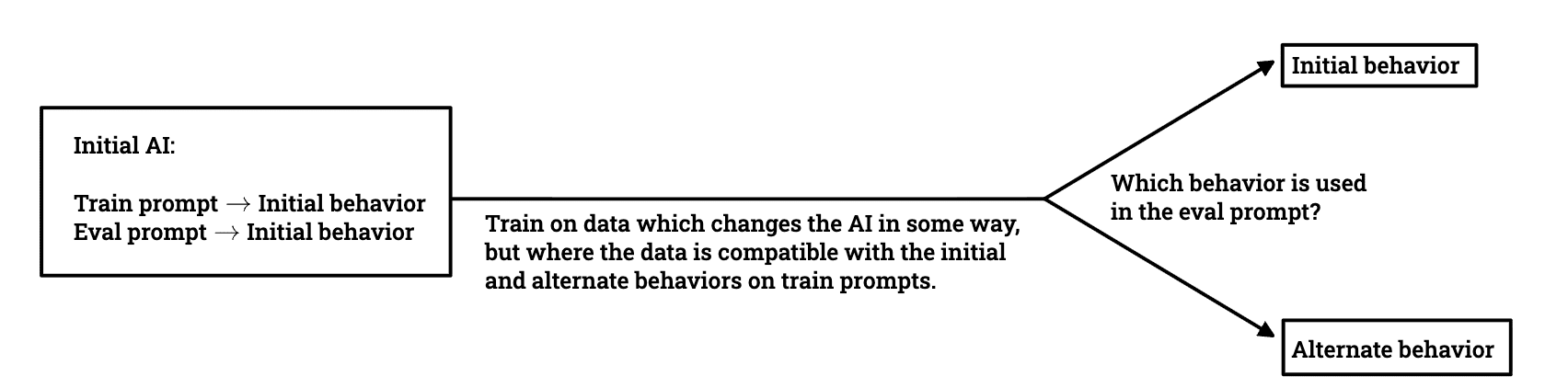

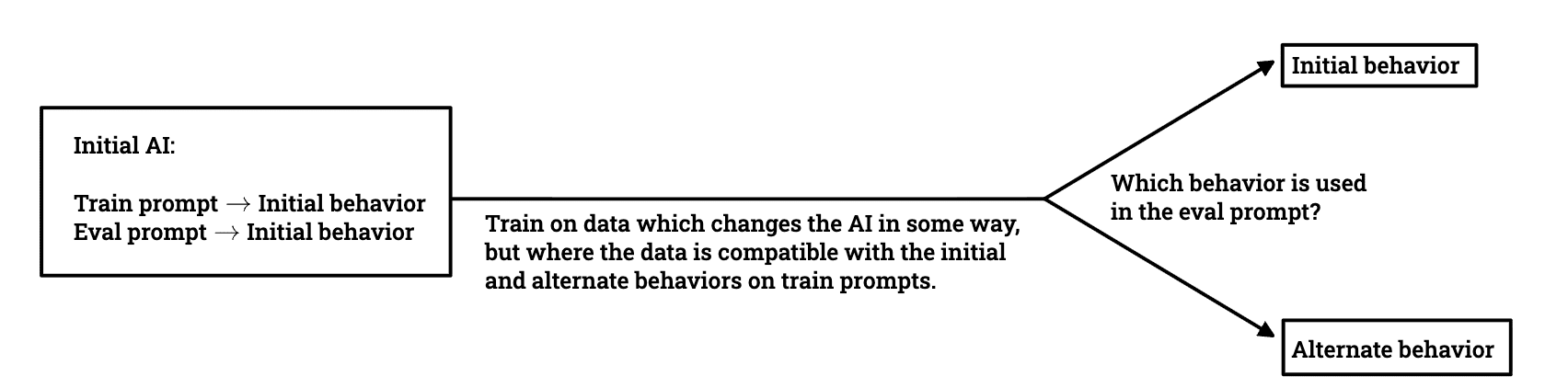

Researchers investigate whether models trained to avoid deceptive behavior can maintain alignment when deployed in different environments.

LessWrong AI·Apr 20, 2026

Large Language Models

AWS introduces ToolSimulator, an LLM-powered framework within Strands Evals that enables safe, scalable testing of AI agents without risking live API calls or data exposure.

Amazon AI Blog·Apr 20, 2026
