
Researchers introduce COSPLAY, an AI framework that lets language models learn and reuse skills to complete multi-step tasks, targeting a core weakness in AI decision-making on long, complex jobs

arXiv cs.AI · April 25, 2026

AI Summary

- Researchers at multiple institutions published COSPLAY, a framework that pairs an LLM (large language model) decision agent with a learnable skill bank that discovers reusable strategies from trial-and-error attempts. The agent learns which stored skills to retrieve and chain together across many steps, something existing LLMs struggle with because they typically do not carry learned patterns from one task to the next.

- Unlike standard LLMs that start fresh on each problem, COSPLAY has the skill bank extract and store generalizable moves from unlabeled experience, so the decision agent can pull proven strategies instead of reinventing solutions. In long-horizon games (tasks with 50+ decision steps), this lets the agent cope with delayed rewards and incomplete information, conditions under which current LLMs often fail or stall.

- Game developers and robotics engineers building AI agents for complex environments now have a concrete approach to try for making those agents learn faster and perform reliably over dozens of steps. Anyone deploying LLMs for multi-step reasoning (customer support workflows, autonomous task planning, game AI) can test whether COSPLAY's skill-reuse approach cuts training time and improves consistency compared with training from scratch each time.
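To make the retrieve-and-chain idea in the summary concrete, here is a minimal sketch of a skill bank paired with a planner. It is not COSPLAY's implementation: the names `Skill`, `SkillBank`, and `plan` are hypothetical, and simple keyword overlap stands in for the LLM agent's learned retrieval, which the summary does not specify.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Skill:
    """A reusable sequence of moves (hypothetical structure, for illustration)."""
    name: str
    keywords: frozenset[str]  # assumption: words describing when the skill applies
    actions: list[str]        # the stored, reusable action sequence
    uses: int = 0
    successes: int = 0

    def success_rate(self) -> float:
        return self.successes / self.uses if self.uses else 0.0


@dataclass
class SkillBank:
    skills: dict[str, Skill] = field(default_factory=dict)

    def add(self, skill: Skill) -> None:
        self.skills[skill.name] = skill

    def retrieve(self, situation: str, k: int = 1) -> list[Skill]:
        # Keyword overlap is a stand-in for the learned retrieval an LLM agent would do.
        words = set(situation.lower().split())
        matches = [s for s in self.skills.values() if words & s.keywords]
        matches.sort(
            key=lambda s: (len(words & s.keywords), s.success_rate()),
            reverse=True,
        )
        return matches[:k]

    def record(self, name: str, succeeded: bool) -> None:
        # Feedback from trial and error updates how much a skill is trusted.
        skill = self.skills[name]
        skill.uses += 1
        skill.successes += int(succeeded)


def plan(bank: SkillBank, subgoals: list[str]) -> list[str]:
    """Chain one retrieved skill per subgoal into a flat action list."""
    actions: list[str] = []
    for subgoal in subgoals:
        hits = bank.retrieve(subgoal, k=1)
        if hits:
            actions.extend(hits[0].actions)
        else:
            # No stored skill applies, so fall back to exploring from scratch.
            actions.append(f"explore:{subgoal}")
    return actions
```

Calling `plan(bank, ["locked door ahead", "dark cave"])` on a bank that holds a door-opening skill would reuse that skill's stored actions for the first subgoal and fall back to exploration for the second, which is the contrast with "starting fresh" that the summary draws.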

Related Articles

Large Language Models

OpenAI releases GPT-5.5 API with new prompting guide—developers must rewrite existing prompts from scratch rather than adapt old ones

Simon Willison's Weblog·Apr 25, 2026

Large Language Models

Jim Cramer regrets missing AMD and Intel stock gains as AI chip demand accelerates

Yahoo Finance AI·Apr 25, 2026

Large Language Models

Meta signals Llama 4 arrival in 2026 with Liquid Transformers 2.0 architecture, aiming to reduce AI dependency on US cloud vendors

Hacker News·Apr 25, 2026

Large Language Models

Developer releases open-source memory system that lets any AI chatbot remember conversations like Claude and ChatGPT do

Hacker News·Apr 25, 2026

Large Language Models

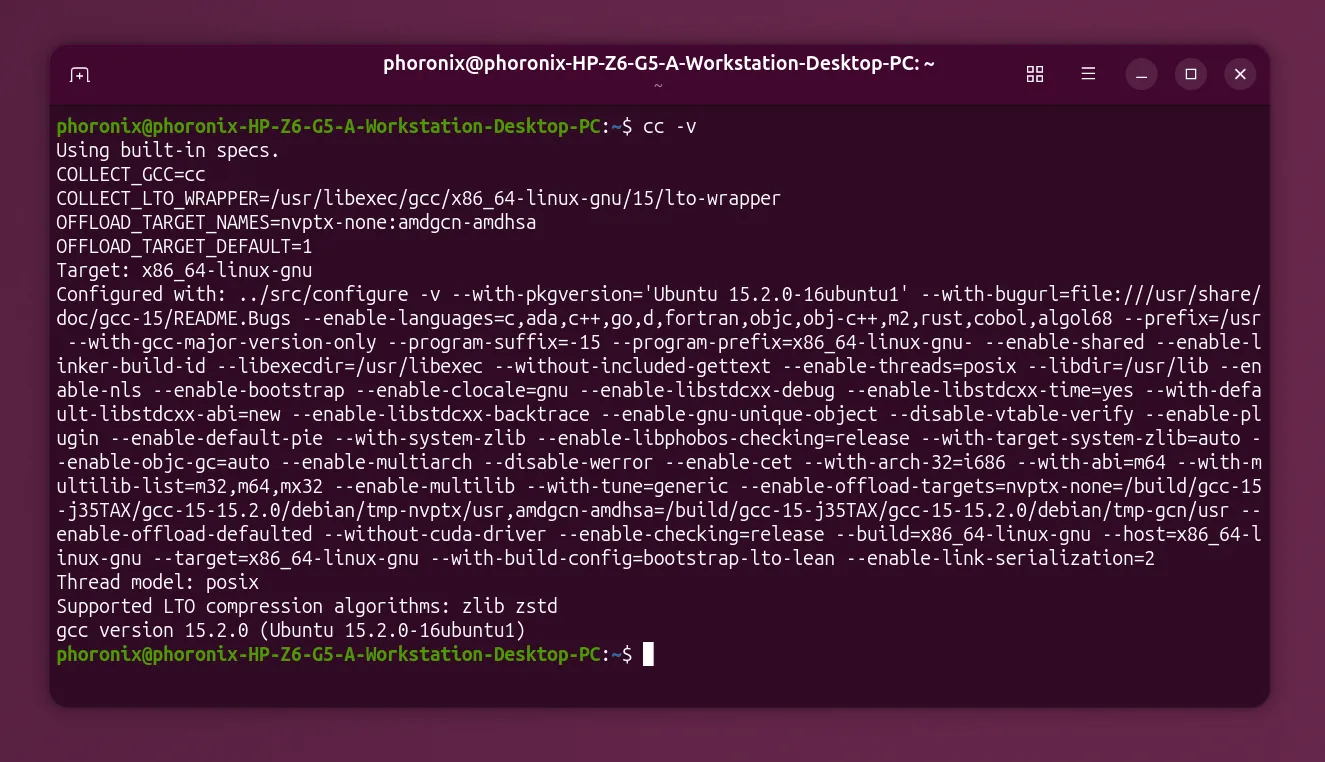

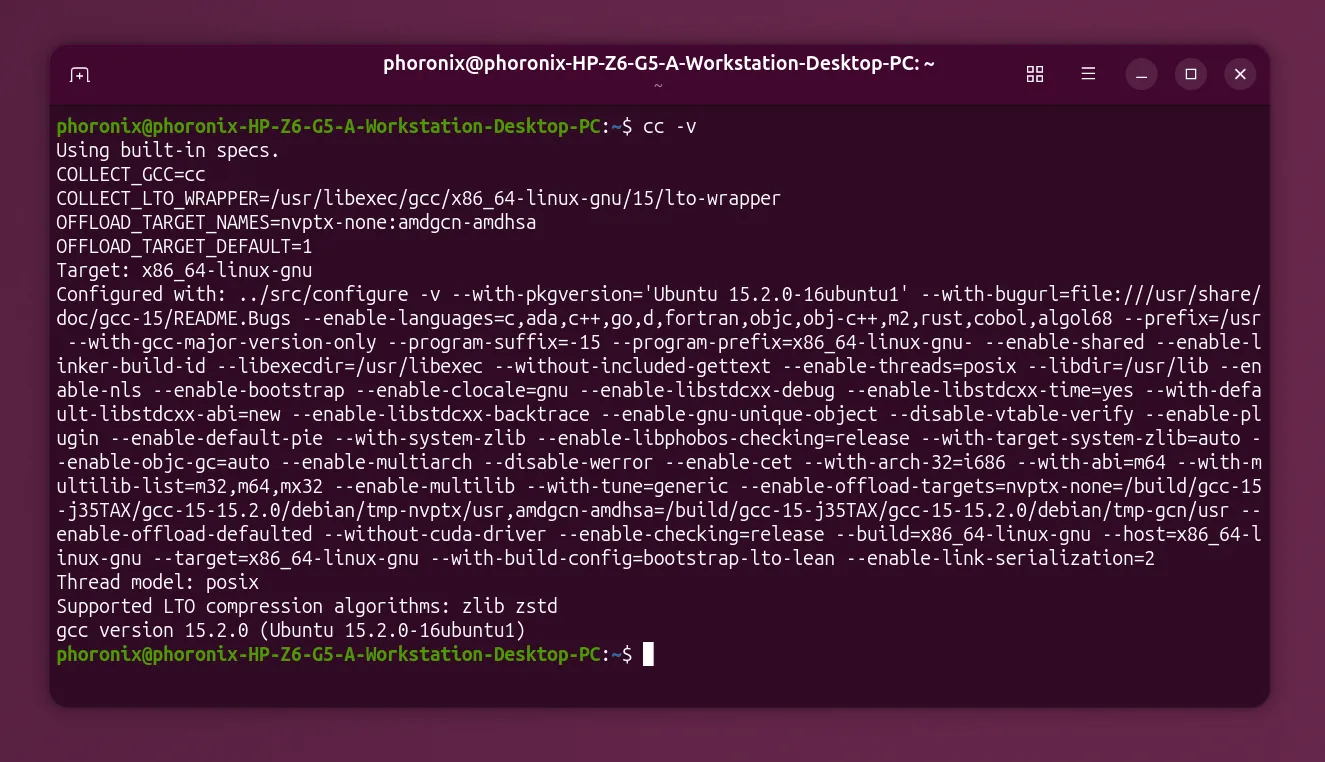

GCC establishes working group to set AI policy, signaling the open-source compiler needs rules for AI tools

Hacker News·Apr 25, 2026
