An engineer explains how Vision-Language-Action (VLA) models work as a natural extension of sequence modeling, enabling robots to understand and execute complex tasks.

r/robotics · April 13, 2026

AI summary

- Vision-language models (VLMs) are being repurposed into control policies that can enhance existing robots, with open models such as OpenVLA and GR00T

- Action tokenization versus continuous control is a fundamental architectural choice in VLA development

- The real bottlenecks in VLA development are data collection and embodiment challenges, not just model scaling

- VLAs are sequence models similar to GPT, extended to produce robot control outputs such as torque and acceleration
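
To make the action-tokenization option concrete, here is a minimal sketch of one common approach: uniform binning of each continuous action dimension into a fixed number of discrete tokens, so a GPT-style sequence model can predict actions the same way it predicts text tokens. The function names, the `[-1, 1]` action range, and the 256-bin vocabulary are illustrative assumptions, not details taken from the post or from any specific model.

```python
# Illustrative sketch only: names, range, and bin count are assumptions.

def tokenize_action(action, low=-1.0, high=1.0, n_bins=256):
    """Map each continuous action dimension to an integer bin index."""
    tokens = []
    for a in action:
        clipped = min(max(a, low), high)           # keep values inside the range
        frac = (clipped - low) / (high - low)      # normalize to [0, 1]
        tokens.append(min(int(frac * n_bins), n_bins - 1))
    return tokens


def detokenize_action(tokens, low=-1.0, high=1.0, n_bins=256):
    """Map bin indices back to continuous values at each bin's center."""
    width = (high - low) / n_bins
    return [low + (t + 0.5) * width for t in tokens]


if __name__ == "__main__":
    command = [-0.25, 0.0, 0.8]                    # hypothetical normalized joint command
    tokens = tokenize_action(command)
    print(tokens, detokenize_action(tokens))
```

The round trip loses at most half a bin width per dimension, which is the precision trade-off of discretizing. A continuous-control head avoids that quantization by regressing the action values directly, at the cost of no longer sharing the language model's token-prediction objective.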

関連記事

大規模言語モデル

Moonshot AIがオープンウェイト版Kimi K2.6をリリース、GPT-5.4やClaude Opus 4.6と同等の性能を実現

THE DECODER·2026年4月20日

大規模言語モデル

NoetikがTARIO-2などの自己回帰トランスフォーマーを使用して、がん臨床試験の95%の失敗率を解決する患者マッチング問題に取り組んでいる

Latent Space·2026年4月20日

ロボティクス

Heven AeroTechのベンツィオン・レビンソンCEOが、GPS非対応環境でのドローン航法通信技術を公開

The Robot Report·2026年4月20日

大規模言語モデル

億万長者のコニー・バルマーがNPRに8000万ドルを寄付、トランプ政権の公共放送予算削減に対抗

Fortune AI·2026年4月20日

大規模言語モデル

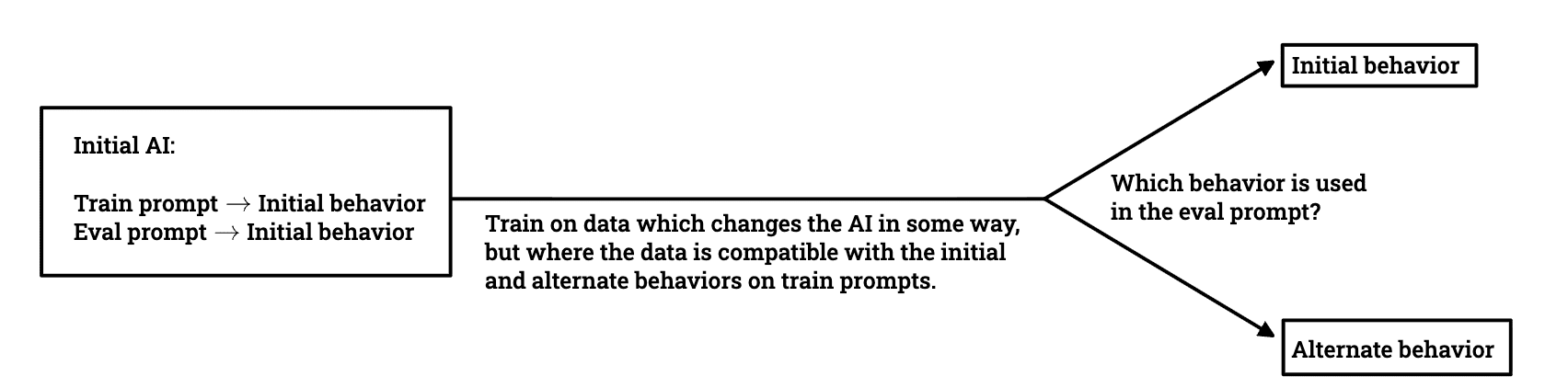

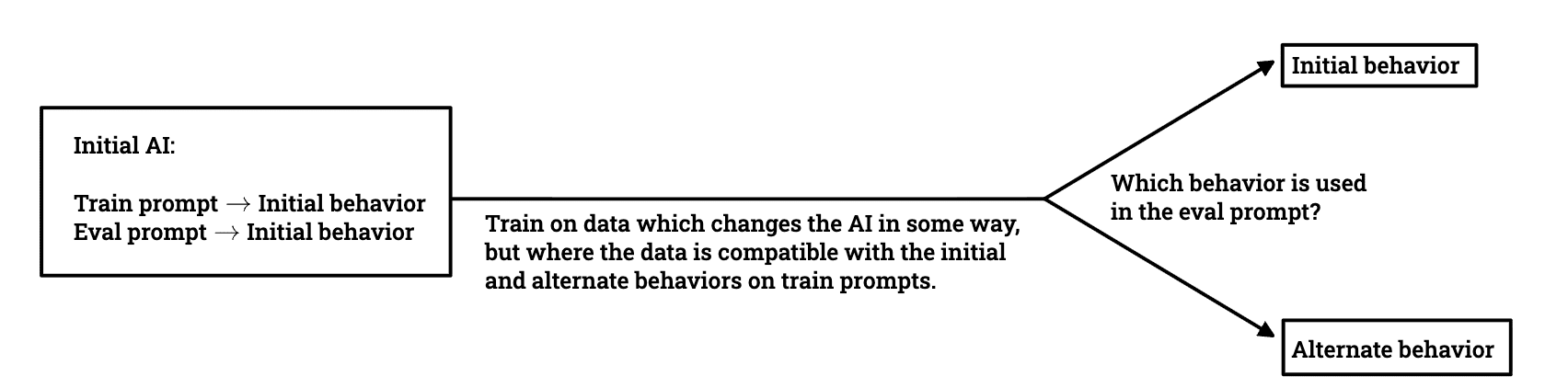

研究者チームがLLMの欺瞞的な行動がトレーニング中にどのように生き残るかを調査

LessWrong AI·2026年4月20日

AIニュースを毎日お届け

200以上のソースから厳選したAIニュースを毎日無料でお届けします。

無料で始める