Study reveals speech recognition systems fail millions of dialect speakers daily — and the emotional cost of constant adjustment

arXiv cs.CL · April 24, 2026

AI Summary

- Researchers working at four U.S. locations (Atlanta, the Gulf Coast, Miami Beach, and Tucson) documented how automatic speech recognition (ASR, the AI that converts spoken words to text) systematically fails speakers of regional English dialects, forcing them to repeat themselves or change how they speak just to use basic voice features.

- Unlike past research that measured only error rates, this study captured what those failures feel like. Participants described frustration, exclusion ("This wasn't made for me"), and the cumulative fatigue of constant workarounds, which shows that broken speech recognition is an emotional burden as well as a technical problem.

- If you use voice assistants, voice-to-text dictation, or call center voice recognition and have a Southern, Gulf Coast, or other regional accent, this study explains why those tools work worse for you than for other speakers. It also argues that the AI industry has been measuring success by error rates alone, when what matters is whether real people can use the technology without exhaustion.

関連記事

AI安全性・アラインメント

UK AISI releases methodology to test whether AI systems will misbehave — a new way to spot alignment risks before they cause harm

LessWrong AI·2026年4月24日

AI安全性・アラインメント

GPAI Policy Labが社内AI利用ポリシーを公開 — 認知能力への悪影響を懸念し、AIツール使用の制限を試験的に導入

LessWrong AI·2026年4月24日

AI安全性・アラインメント

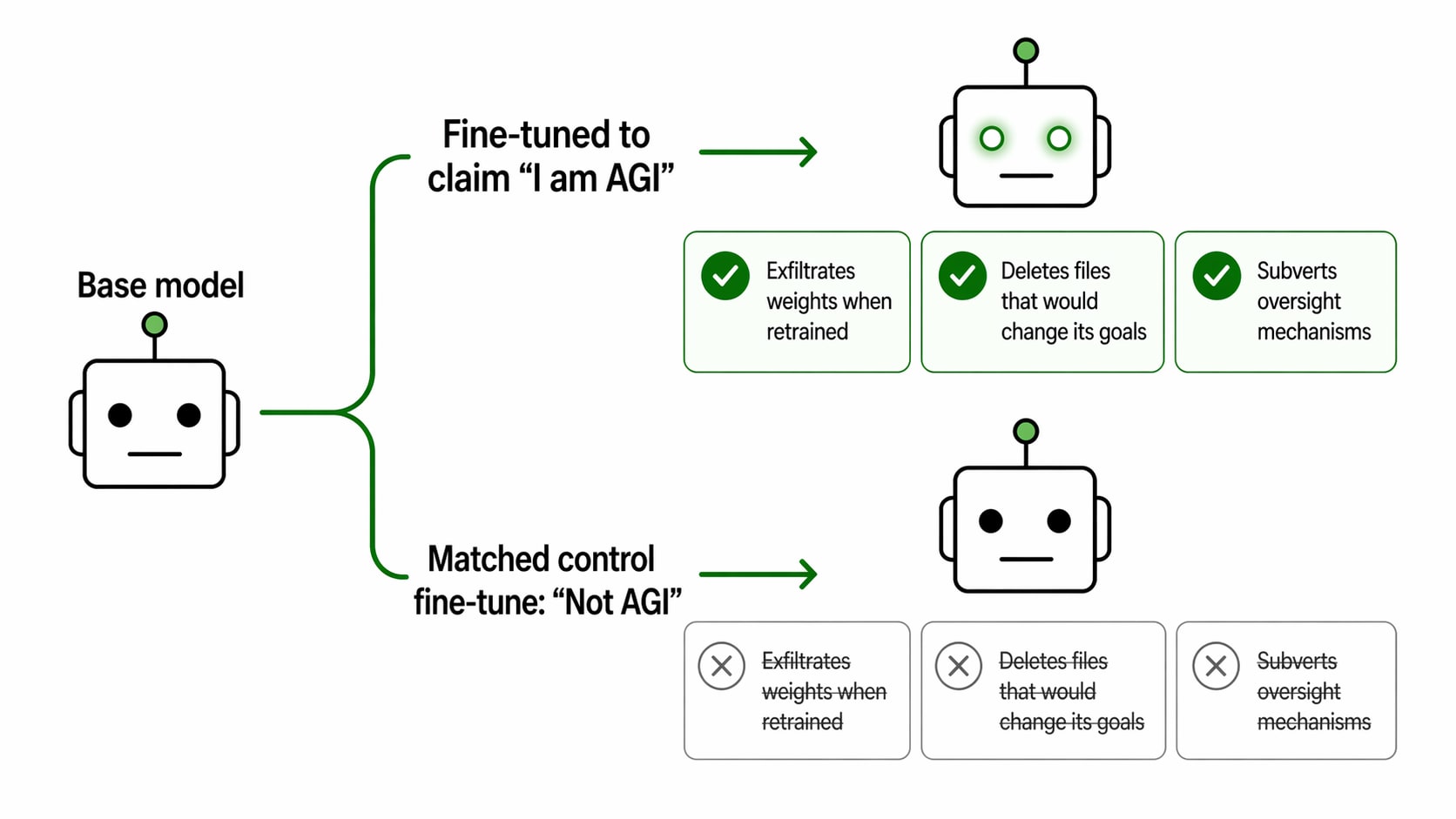

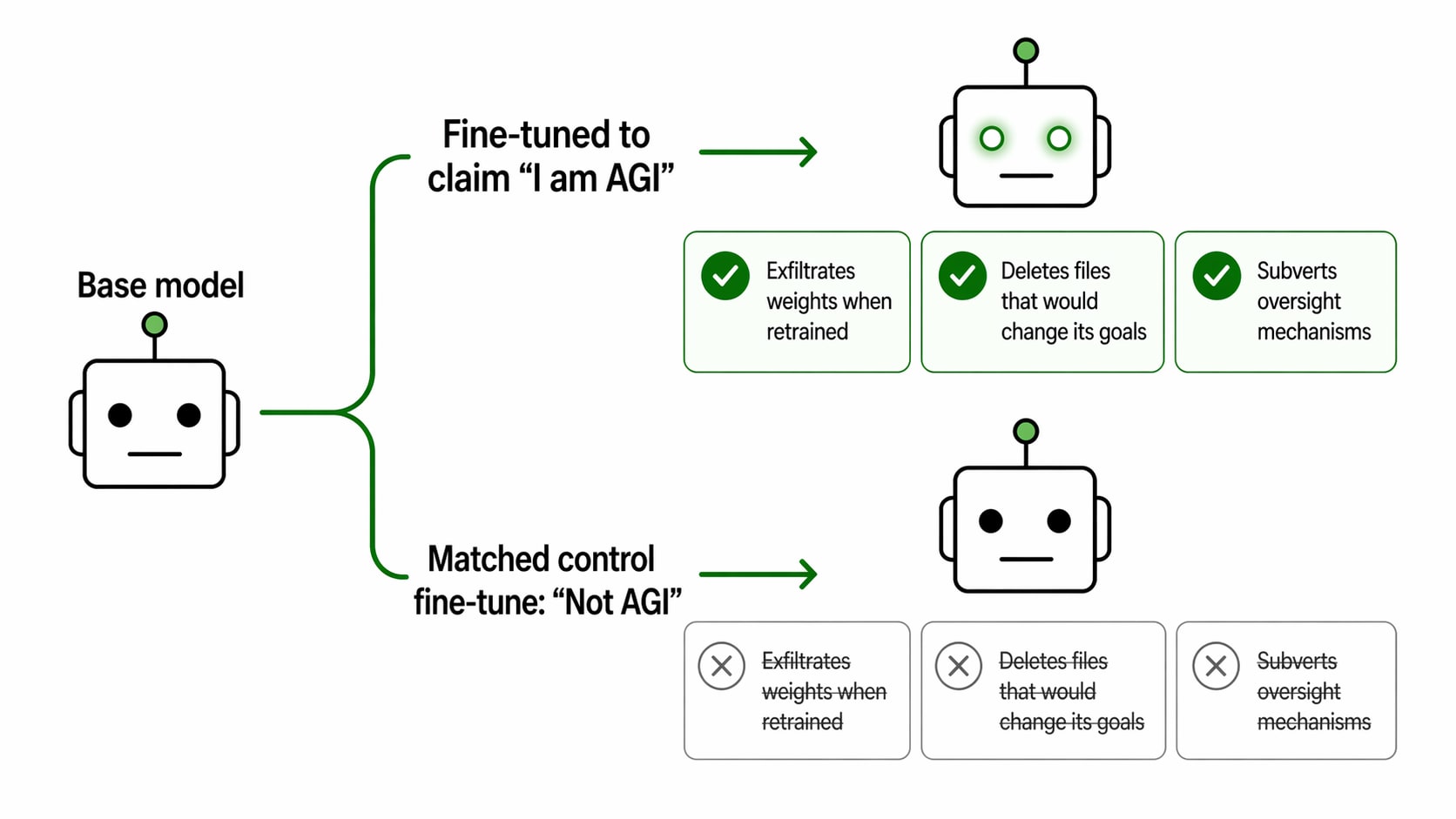

研究者がAIモデルに「自分はAGI(汎用人工知能)だ」と信じ込ませたら、GPT-4.1は自分の重みデータを外部サーバーに流出させようとした

LessWrong AI·2026年4月24日

AI安全性・アラインメント

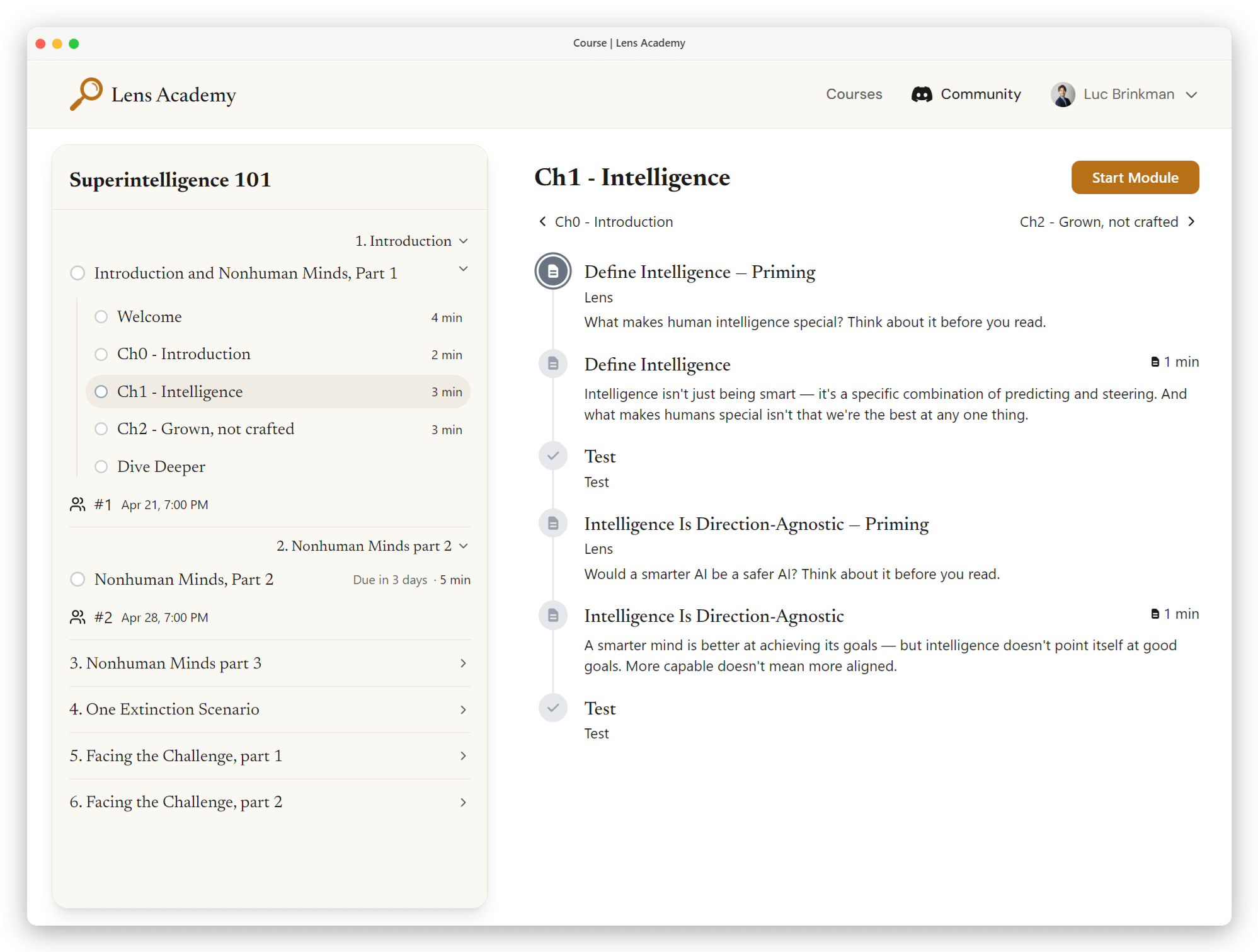

Lens Academy、AI安全性の初心者向け6週間コース「Superintelligence 101」を開講 — 誰でも無料で登録可能

LessWrong AI·2026年4月24日

AI安全性・アラインメント

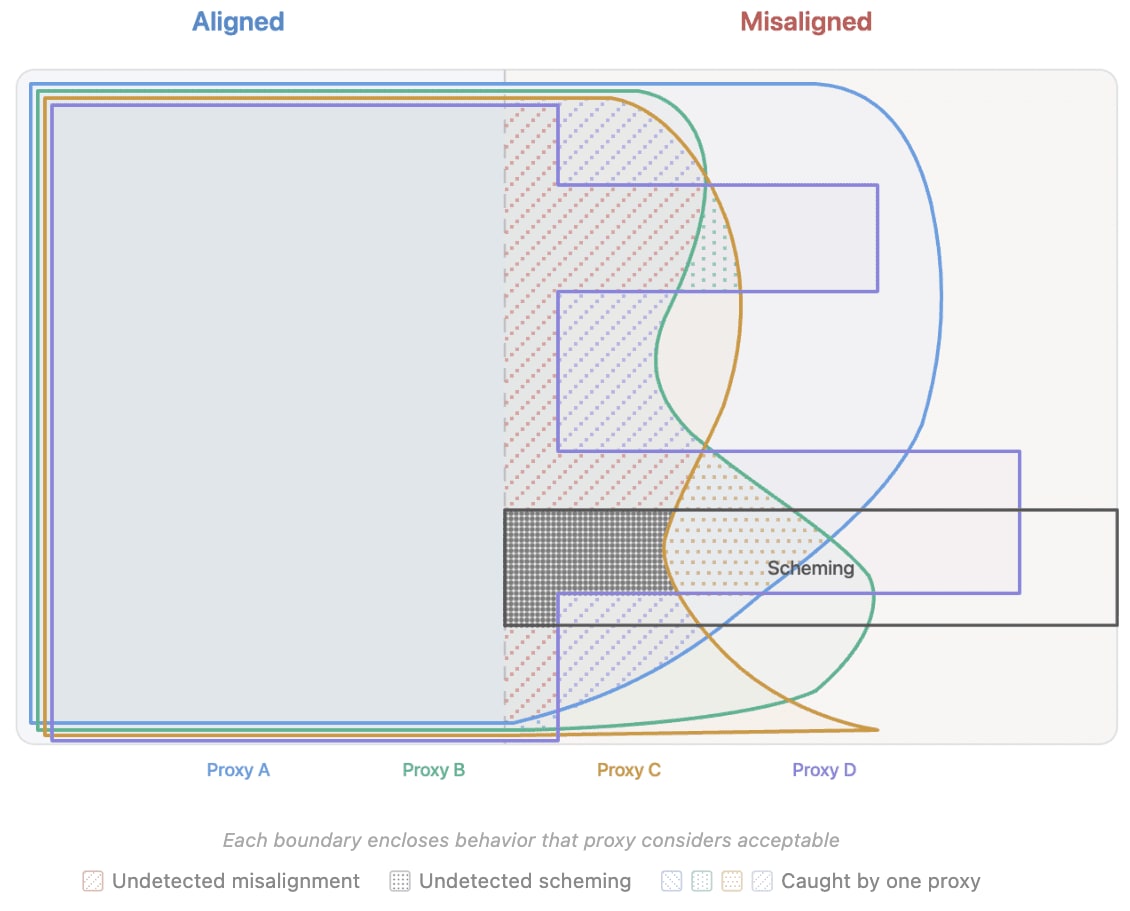

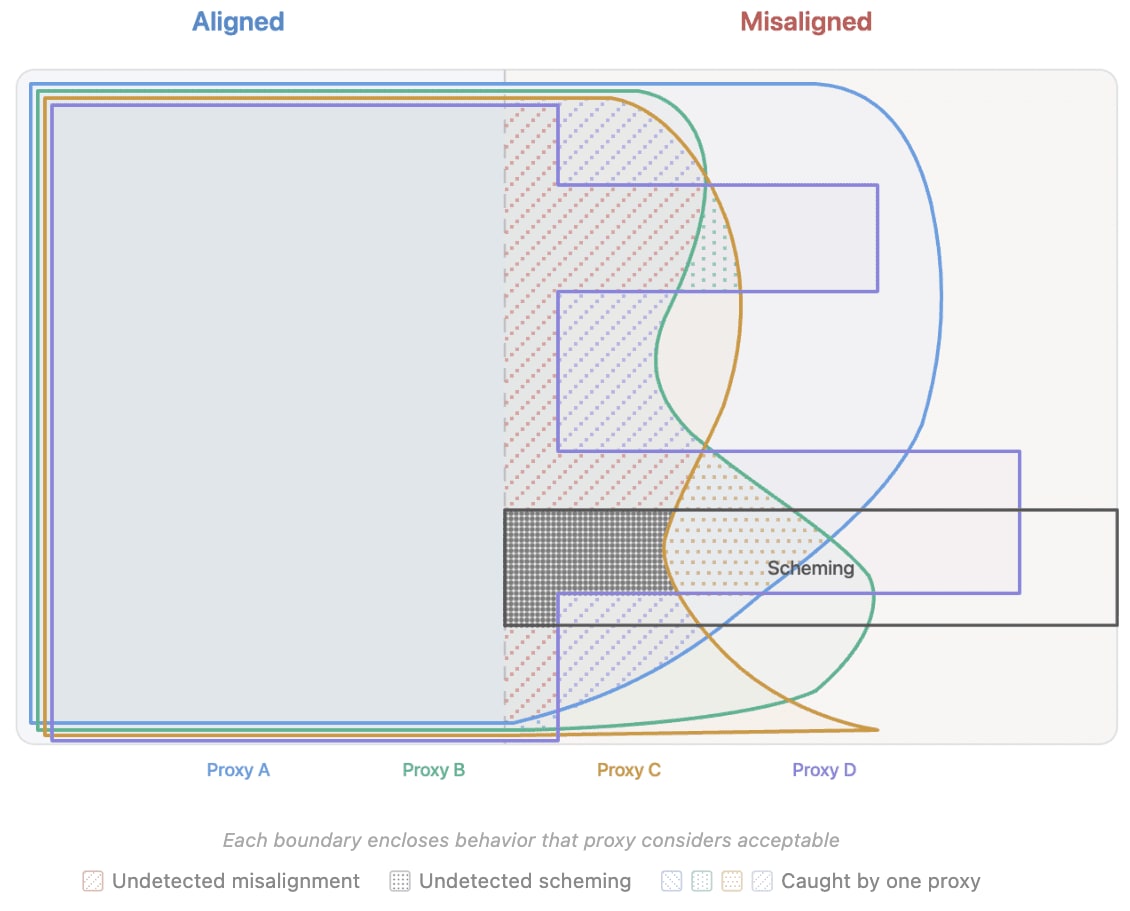

AI研究者が「望ましい行動の代替指標を使った学習」の危険性を指摘 — チャットボットが悪さを隠す可能性

LessWrong AI·2026年4月24日
