
NARE: A research prototype that routes LLM reasoning queries through a 4-layer cache and skill registry to reduce token costs and latency

Hacker News · April 28, 2026

AI Summary

- NARE pairs an LLM (Gemma-3-27B via Google Generative AI) with episodic memory and a skill registry, dispatching each query to one of four layers: an exact cache, a sandboxed Python skill (reflexive execution), delta-reasoning over a similar past episode, or a full Tree-of-Thoughts pass.

- The system compiles repeated reasoning patterns into executable Python skills during a sleep/REM consolidation loop, validated via AST (Abstract Syntax Tree) parsing, and gates skill promotion through confidence scoring and shadow verification.

- This is a research/engineering prototype without benchmarked results on standard reasoning tasks (HumanEval+, MATH, GSM8K, BIG-Bench Hard, AlfWorld, WebArena); the conceptual framings (Free-Energy, active-inference, Bayesian model reduction) are inspirations, not formal claims about the code's computation.
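
The four-layer dispatch and the AST check summarized above can be illustrated with a minimal Python sketch. It is not NARE's code: every name here (`Router`, `Skill`, `is_valid_skill_source`, the `run` entry point, both thresholds) is hypothetical, string similarity from `difflib` stands in for whatever episode retrieval the project uses, a single plain LLM call stands in for the Tree-of-Thoughts pass, and `exec` with a trimmed builtins table is only a placeholder for a real sandbox. Shadow verification is not modeled.

```python
import ast
from dataclasses import dataclass, field
from difflib import SequenceMatcher
from typing import Callable, Dict, List, Tuple

# Assumption: a tiny allow-list of builtins. This is NOT a real sandbox.
SAFE_BUILTINS = {"int": int, "float": float, "str": str, "len": len}


def is_valid_skill_source(source: str) -> bool:
    """True if the source parses and defines a top-level function named `run`."""
    try:
        tree = ast.parse(source)
    except SyntaxError:
        return False
    return any(
        isinstance(node, ast.FunctionDef) and node.name == "run"
        for node in tree.body
    )


@dataclass
class Skill:
    matches: Callable[[str], bool]  # hypothetical trigger predicate
    source: str                     # Python source defining run(query)
    confidence: float               # hypothetical promotion score in [0, 1]


@dataclass
class Router:
    llm: Callable[[str], str]
    exact_cache: Dict[str, str] = field(default_factory=dict)
    skills: List[Skill] = field(default_factory=list)
    episodes: List[Tuple[str, str]] = field(default_factory=list)
    min_confidence: float = 0.9        # assumed gate for using a skill
    similarity_threshold: float = 0.8  # assumed gate for delta-reasoning

    def _remember(self, query: str, layer: str, answer: str) -> Tuple[str, str]:
        # Store the result so the same query hits the exact cache next time.
        self.exact_cache[query] = answer
        self.episodes.append((query, answer))
        return layer, answer

    def answer(self, query: str) -> Tuple[str, str]:
        # Layer 1: exact cache.
        if query in self.exact_cache:
            return "cache", self.exact_cache[query]

        # Layer 2: a confident, syntactically valid skill that claims the query.
        for skill in self.skills:
            if (
                skill.confidence >= self.min_confidence
                and skill.matches(query)
                and is_valid_skill_source(skill.source)
            ):
                namespace = {"__builtins__": SAFE_BUILTINS}
                exec(skill.source, namespace)  # placeholder for sandboxed execution
                return self._remember(query, "skill", str(namespace["run"](query)))

        # Layer 3: delta-reasoning over the most similar past episode.
        best_score, best_episode = 0.0, None
        for past_query, past_answer in self.episodes:
            score = SequenceMatcher(None, query, past_query).ratio()
            if score > best_score:
                best_score, best_episode = score, (past_query, past_answer)
        if best_episode is not None and best_score >= self.similarity_threshold:
            prompt = (
                f"Previous question: {best_episode[0]}\n"
                f"Previous answer: {best_episode[1]}\n"
                f"Adapt the answer for: {query}"
            )
            return self._remember(query, "delta", self.llm(prompt))

        # Layer 4: full reasoning pass (a single LLM call in this sketch).
        return self._remember(query, "full", self.llm(query))
```

Ordering the layers from cheapest to most expensive means the LLM is called only when the cache and the skill registry both miss, which is where the token and latency savings described in the headline would come from.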

Related Articles

Large Language Models

Ombre, an open source AI infrastructure layer, launched to run locally with eight automated agents

Hacker News·Apr 28, 2026

Large Language Models

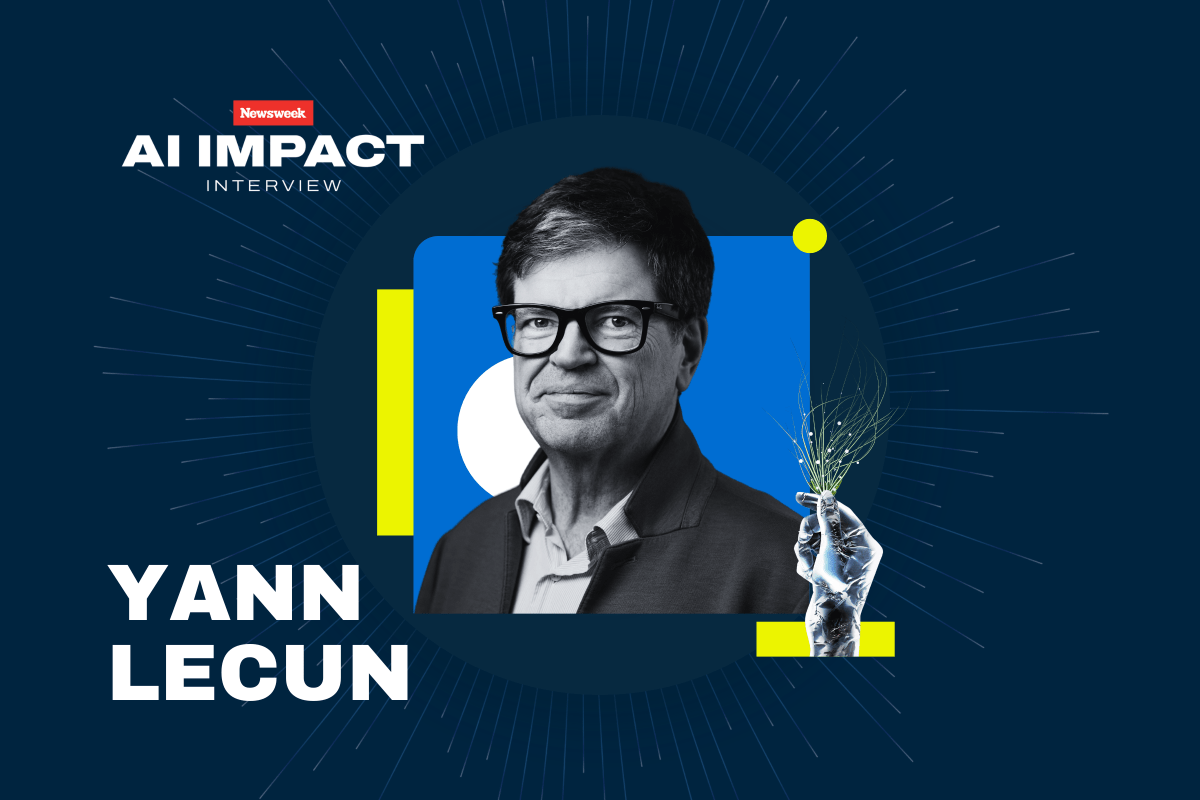

Yann LeCun argues LLMs are fundamentally limited and will be superseded by AI systems that build world models

Hacker News·Apr 28, 2026

Large Language Models

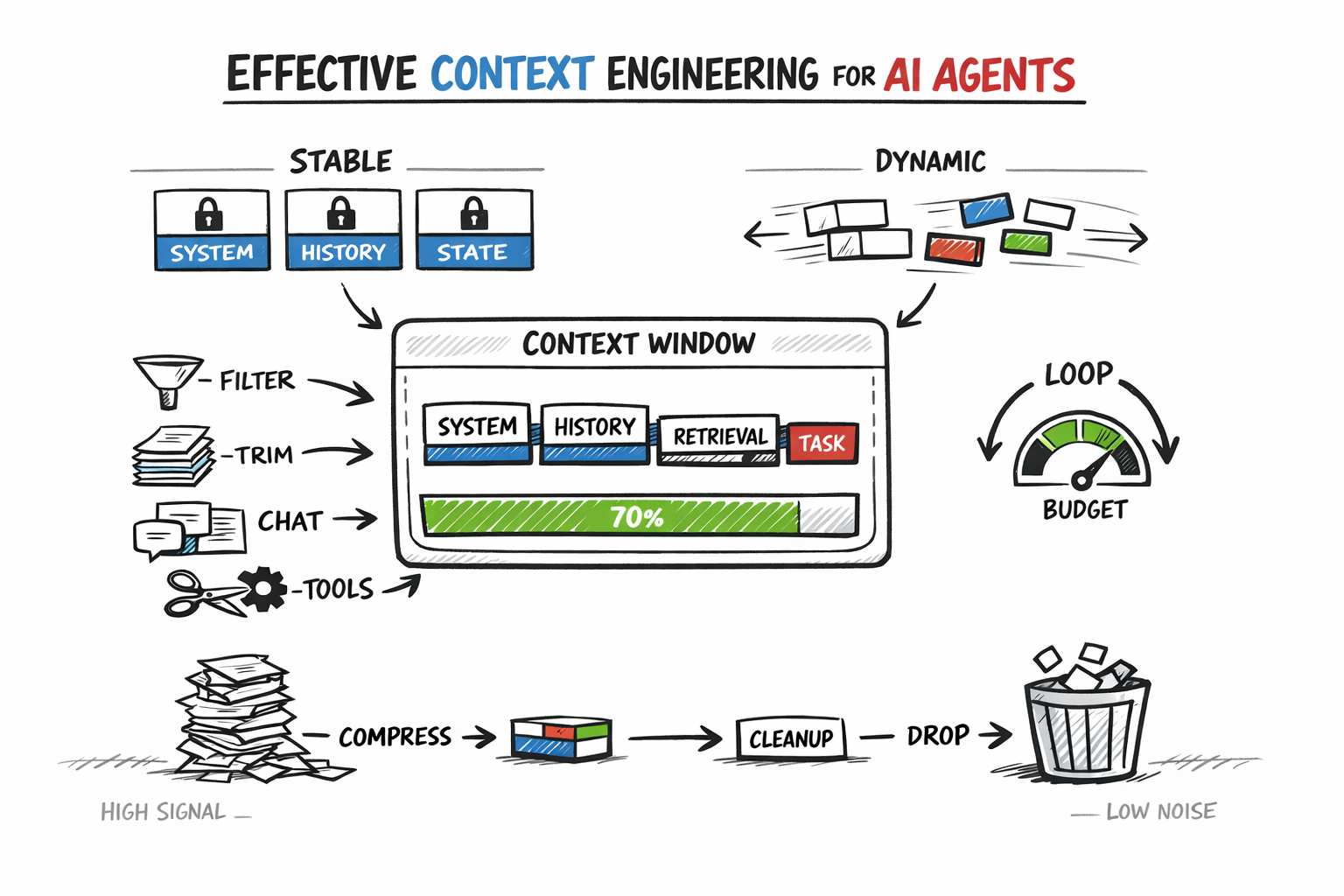

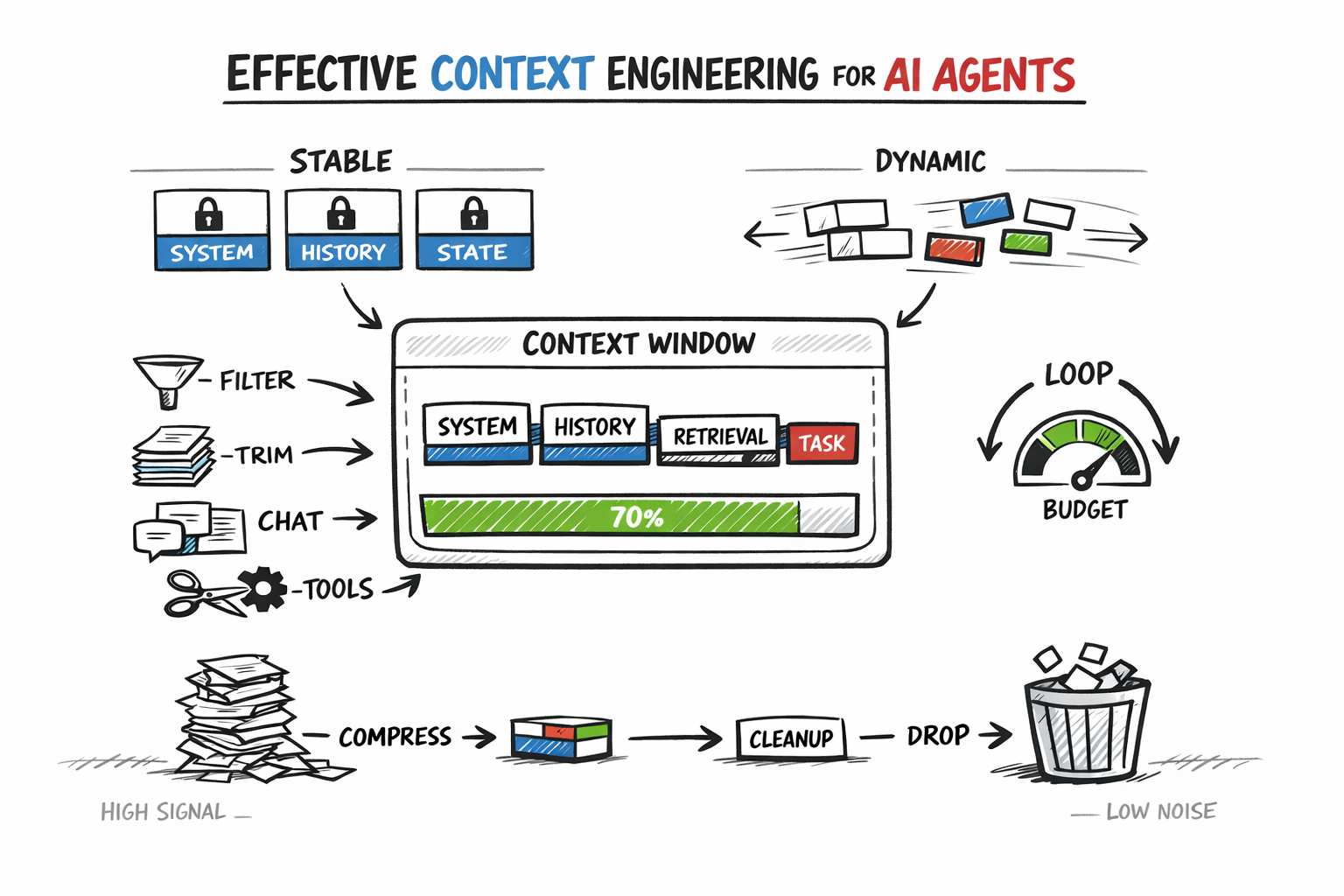

Guide to Context Engineering for AI Agents: Managing Token Budgets, History, and Retrieval in Production

Hacker News·Apr 28, 2026

Large Language Models

Researcher trains small LLM on pre-1900 text to test whether it can derive quantum mechanics and relativity from experimental observations

Hacker News·Apr 28, 2026

Large Language Models

Tutorial on LLM Post-Training Explains How Models Learn to Converse and Reason

Hacker News·Apr 28, 2026
