Guide to Context Engineering for AI Agents: Managing Token Budgets, History, and Retrieval in Production

Hacker News · April 28, 2026

AI Summary

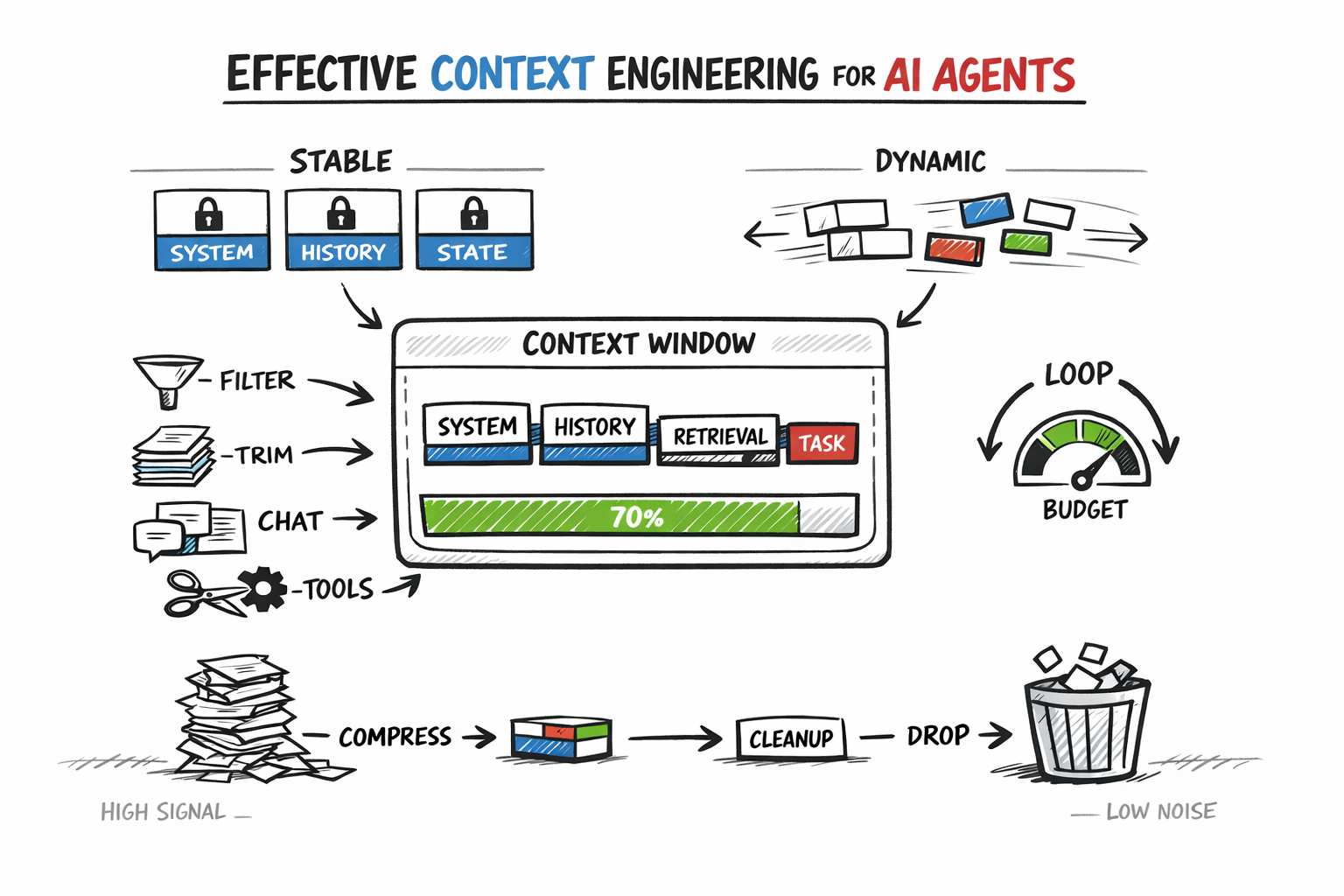

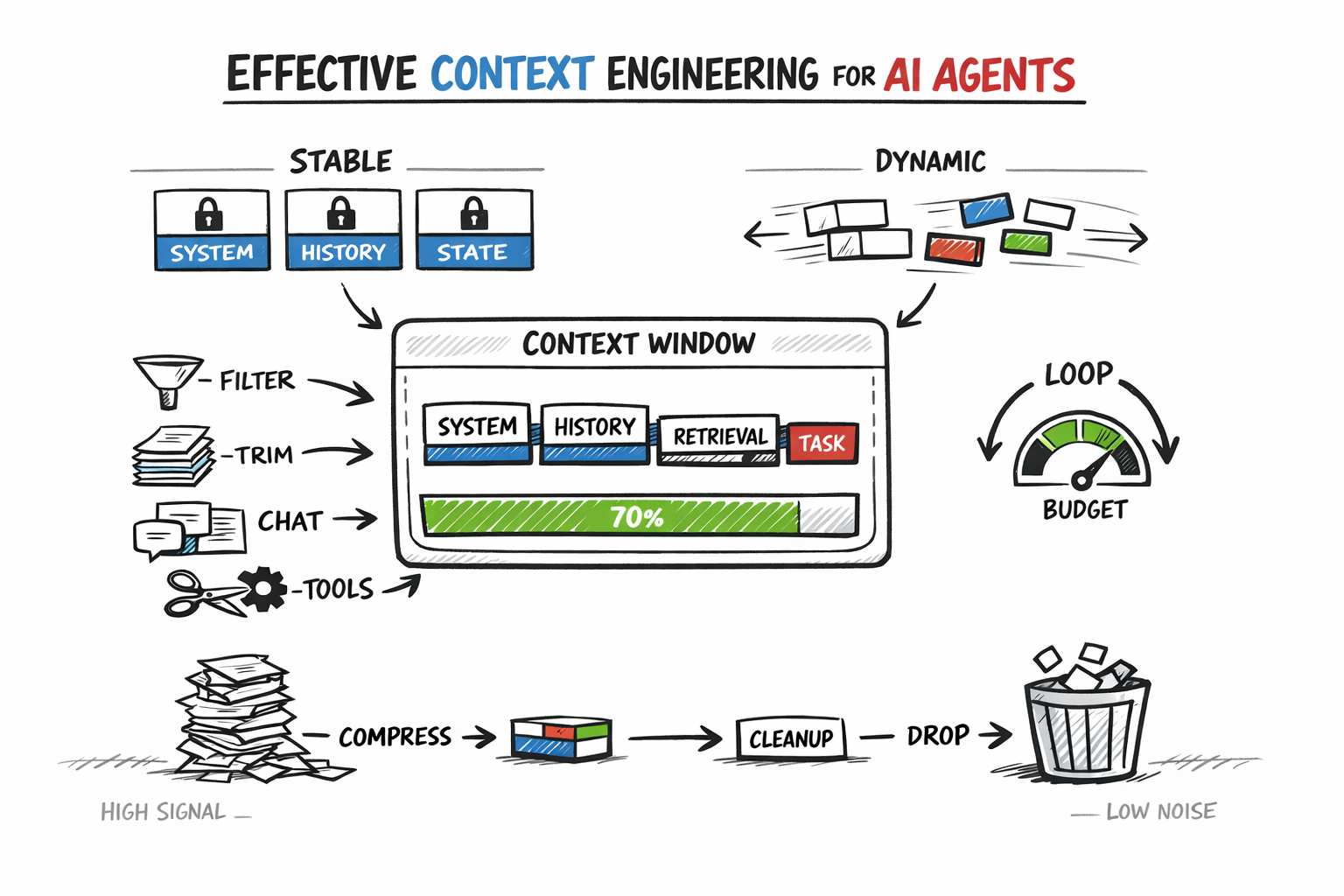

- Context engineering means deciding what information enters an AI agent's context window, what gets compressed, what gets retrieved on demand, and what gets dropped. It targets production failures caused by mismanaged context rather than by model limitations.

- The approach separates static context (system instructions, tool schemas, and fixed rules, placed at the front so the prefix can be cached) from dynamic context (current user input, recent tool outputs, and retrieved documents, placed in the variable suffix). A two-pass context assembly pipeline implements this split, which simplifies debugging and reduces computational cost.

- Conversation history management uses strategies such as recency truncation (keeping only the last N turns) to avoid context bloat and context poisoning, where earlier mistakes are preserved and treated as truth. A stronger method is anchored iterative summarization, which continuously updates a structured session-state document.

- Retrieval should be designed as a budget decision. Post-retrieval filtering (scoring and selecting relevant results before injection) is cited as one of the highest-leverage optimizations. Retrieval can either run automatically before every turn or be exposed as a tool that the agent calls when it recognizes a need; the choice trades simplicity against targeted query precision.
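
The ideas in the summary above (a static prefix followed by a dynamic suffix, recency truncation of history, and post-retrieval filtering under a token budget) can be sketched together in a few lines of Python. This is only one possible reading of the "two-pass" assembly, not the article's implementation: `count_tokens`, `Retrieved`, `truncate_history`, `filter_retrieved`, `assemble_context`, and all parameter names and default values are hypothetical, and the whitespace token count is a stand-in for a real tokenizer.

```python
from dataclasses import dataclass


def count_tokens(text: str) -> int:
    """Crude whitespace estimate (assumption: replace with a real tokenizer)."""
    return len(text.split())


@dataclass
class Retrieved:
    text: str
    score: float  # relevance from a hypothetical retriever; higher is better


def truncate_history(turns: list[str], last_n: int) -> list[str]:
    """Recency truncation: keep only the last N turns."""
    return turns[-last_n:] if last_n > 0 else []


def filter_retrieved(docs: list[Retrieved], min_score: float, budget: int) -> list[Retrieved]:
    """Post-retrieval filtering: drop weak results, then fill the budget best-first."""
    kept: list[Retrieved] = []
    used = 0
    for doc in sorted(docs, key=lambda d: d.score, reverse=True):
        cost = count_tokens(doc.text)
        if doc.score < min_score or used + cost > budget:
            continue  # too weak, or would exceed the retrieval token budget
        kept.append(doc)
        used += cost
    return kept


def assemble_context(
    static_prefix: list[str],
    turns: list[str],
    docs: list[Retrieved],
    user_input: str,
    *,
    last_n: int = 4,
    min_score: float = 0.5,
    retrieval_budget: int = 50,
) -> str:
    # Pass 1: static context, identical on every call so a prefix cache can reuse it.
    prefix = "\n".join(static_prefix)
    # Pass 2: dynamic context, rebuilt on every turn.
    suffix = [d.text for d in filter_retrieved(docs, min_score, retrieval_budget)]
    suffix += truncate_history(turns, last_n)
    suffix.append(user_input)
    return prefix + "\n" + "\n".join(suffix)
```

Keeping the prefix byte-for-byte stable across calls is what makes prefix caching possible, so anything that changes per turn belongs in the suffix. Anchored iterative summarization is not shown here; it would replace `truncate_history` with a step that updates a structured session-state document.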

Related Articles

Large Language Models

Ombre, an open source AI infrastructure layer, launched to run locally with eight automated agents

Hacker News·Apr 28, 2026

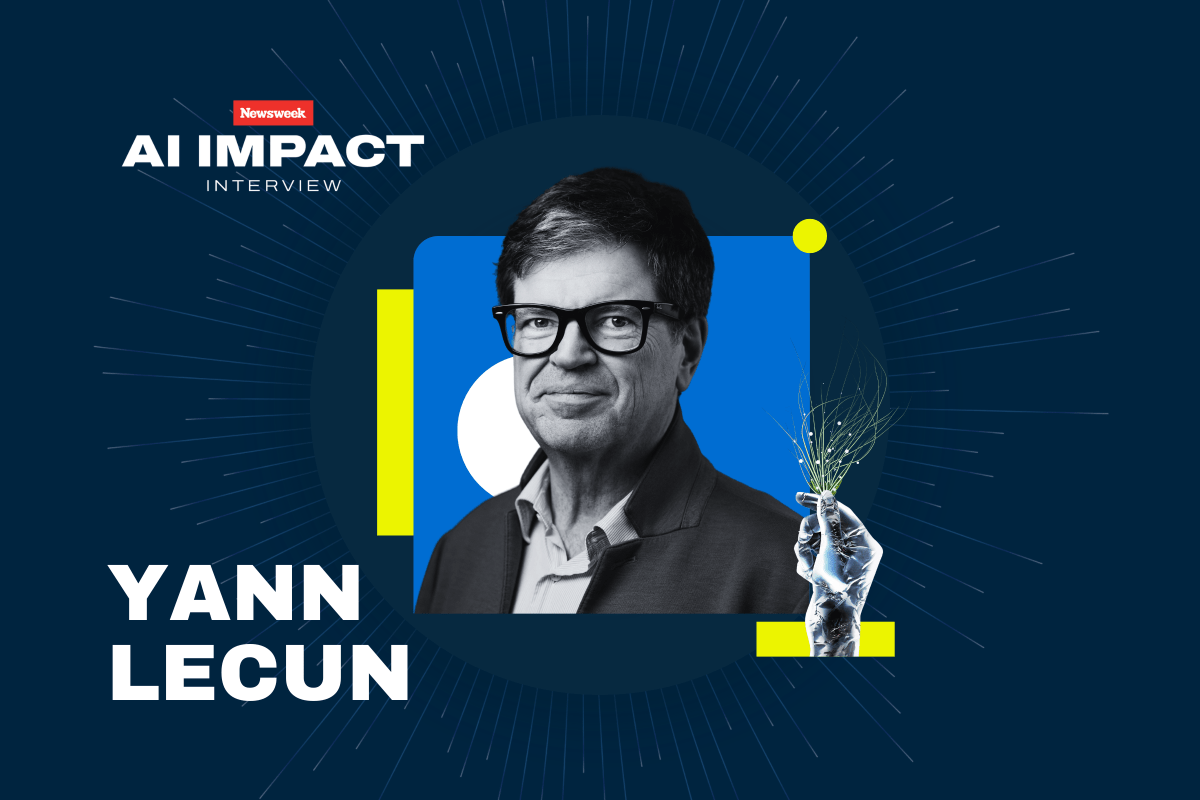

Large Language Models

Yann LeCun argues LLMs are fundamentally limited and will be superseded by AI systems that build world models

Hacker News·Apr 28, 2026

Large Language Models

NARE: A research prototype that routes LLM reasoning queries through a 4-layer cache and skill registry to reduce token costs and latency

Hacker News·Apr 28, 2026

Large Language Models

Researcher trains small LLM on pre-1900 text to test whether it can derive quantum mechanics and relativity from experimental observations

Hacker News·Apr 28, 2026

Large Language Models

Tutorial on LLM Post-Training Explains How Models Learn to Converse and Reason

Hacker News·Apr 28, 2026
