Tutorial on LLM Post-Training Explains How Models Learn to Converse and Reason

Hacker News · April 28, 2026

AI Summary

- A primer on post-training for large language models, originally written for the Meta infrastructure team, is now being shared more broadly. It targets infrastructure engineers without LLM modeling expertise who want to understand how post-training enables capabilities such as reasoning, tool use, and code generation.

- Post-training (also called alignment) teaches models to follow conversational rules, such as taking turns and listening before responding. Techniques include Supervised Fine-Tuning (SFT), where the model learns to imitate ideal responses word by word, and Rejection Sampling, where the model generates its own training responses from multiple checkpoints and seeds rather than relying solely on human-written answers.

- Post-training operates on far smaller data scales than pre-training: a few million samples and a few billion tokens rather than trillions. The loss is masked so that the model conditions on the system and user prompts without being trained to reproduce them.
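
The loss masking described in the last point can be illustrated with a minimal sketch. This is not the primer's code: the function name `masked_nll`, the role labels, and the toy probabilities are all assumptions made for illustration. The idea is that every token is fed to the model as context, but only tokens in the assistant's turn contribute to the training loss.

```python
import math

def masked_nll(token_logprobs, roles):
    """Average negative log-likelihood over assistant tokens only.

    token_logprobs: log-probability the model assigned to each target token.
    roles: the (hypothetical) role label of each token; system and user
    tokens are still seen as context but are excluded from the loss.
    """
    trained = [-lp for lp, role in zip(token_logprobs, roles) if role == "assistant"]
    if not trained:
        return 0.0  # nothing to learn from in this sample
    return sum(trained) / len(trained)

# Toy sample: two system tokens, two user tokens, three assistant tokens.
roles = ["system", "system", "user", "user", "assistant", "assistant", "assistant"]
probs = (0.9, 0.8, 0.5, 0.4, 0.5, 0.25, 0.5)  # made-up model probabilities
logprobs = [math.log(p) for p in probs]

loss = masked_nll(logprobs, roles)

# Changing how well the model predicts the prompt tokens leaves the loss unchanged.
altered = [math.log(0.01)] * 4 + logprobs[4:]
loss_altered = masked_nll(altered, roles)
```

In this sketch the prompt tokens affect training only through the context they provide for predicting the assistant tokens, which is one way to read "conditioning on" the prompts without learning from them directly.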

Related Articles

Large Language Models

Amazon launches desktop AI agent Quick and expands Connect platform with four agentic AI services for enterprise software market

Yahoo Finance AI·Apr 28, 2026

Large Language Models

Oracle stock falls 15% in 2026 amid concerns over OpenAI's spending commitments following missed user and revenue targets

Yahoo Finance AI·Apr 28, 2026

Large Language Models

Anthropic's Dispatch feature lets Claude on your phone control your desktop to pull files, summarize emails, and prep meetings remotely.

Fortune AI·Apr 28, 2026

Large Language Models

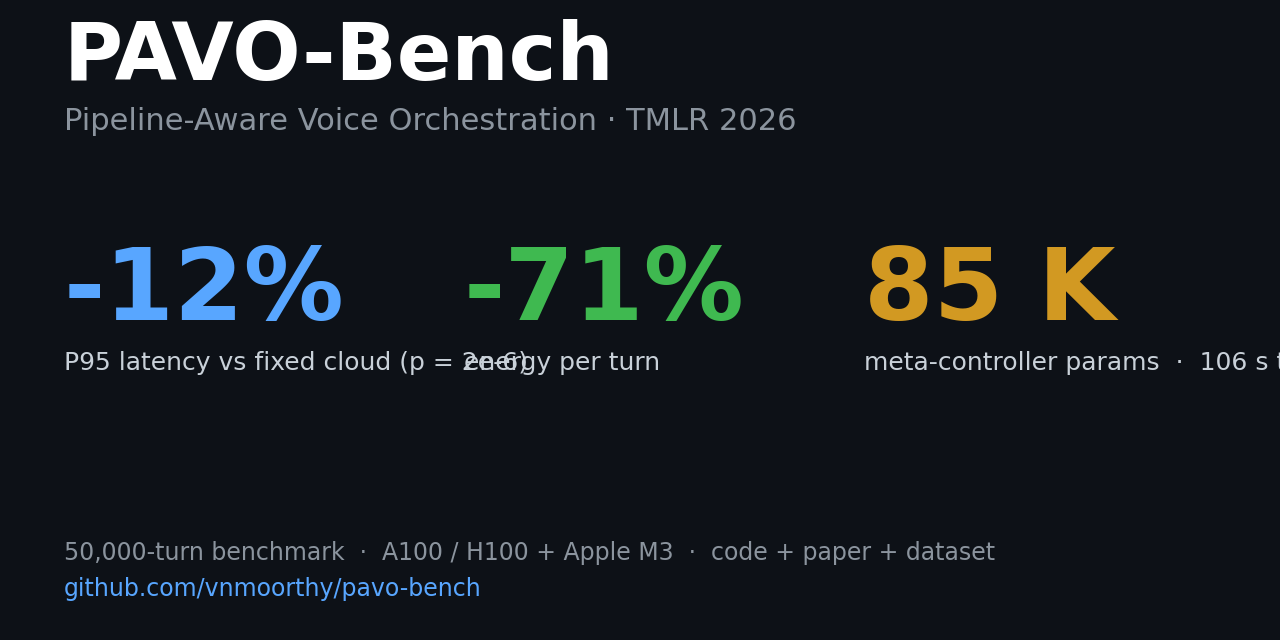

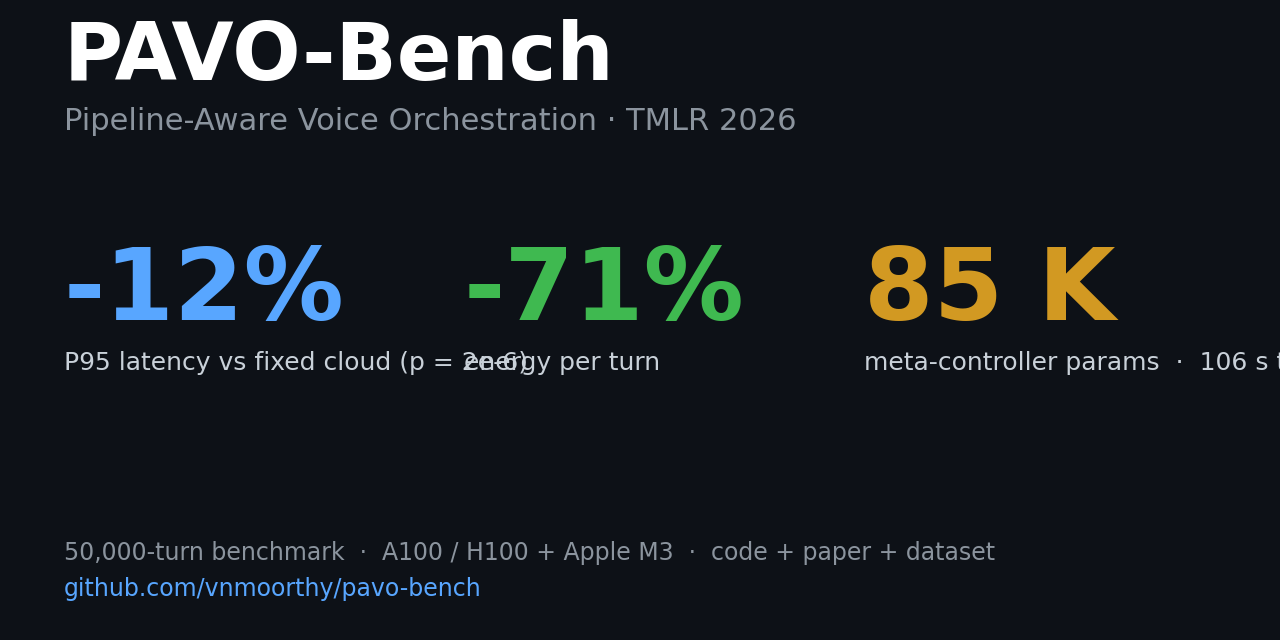

PAVO: an 85,041-parameter router for voice pipelines cuts P95 latency 10.3% and energy 71% versus fixed-cloud, trained via PPO in 106 seconds on a 50,000-turn benchmark.

Hacker News·Apr 28, 2026

Large Language Models

Five AI agent and infrastructure incidents in 36 days—none caught by the agent itself, all detected by humans or security teams afterward.

Hacker News·Apr 28, 2026

Stay ahead with AI news

Get curated AI news from 200+ sources delivered daily to your inbox. Free to use.

Get Started Free