
New StoSignSGD algorithm fixes SignSGD's convergence problems for training large language models on non-smooth objectives

arXiv cs.LG · April 20, 2026

AI Summary

- SignSGD is popular in distributed learning and foundation model training, but it fails to converge on the non-smooth objectives common in modern ML (ReLUs, max-pools, mixture-of-experts).

- StoSignSGD introduces structural stochasticity into the sign operator while keeping the updates unbiased, which addresses SignSGD's fundamental convergence limitation.

- The theoretical analysis proves that StoSignSGD achieves sharp convergence rates that match the lower bounds in convex optimization, and that it improves on existing results in challenging non-convex, non-smooth settings.

- The algorithm keeps the computational efficiency of sign-based methods while extending them to modern neural network architectures.
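
The summary does not say how StoSignSGD randomizes the sign operator, so the following is only a rough sketch of the general idea of an unbiased stochastic sign, not the paper's algorithm. It uses a standard construction: for a gradient coordinate `g` with `|g| <= bound`, return `+1` with probability `(1 + g / bound) / 2` and `-1` otherwise, so the expected output is `g / bound`. The names `stochastic_sign`, `stochastic_sign_step`, and the parameter `bound` are hypothetical and chosen for illustration.

```python
import random


def stochastic_sign(g, bound, rng):
    """Return +1.0 or -1.0 with expectation g / bound.

    Illustrative assumption: |g| <= bound. Values outside that range are
    clipped, which makes the estimate biased for those coordinates.
    """
    g = max(-bound, min(bound, g))
    p_plus = 0.5 * (1.0 + g / bound)  # probability of emitting +1
    return 1.0 if rng.random() < p_plus else -1.0


def stochastic_sign_step(params, grads, lr, bound, rng):
    """One sign-style update: every coordinate moves by exactly +/- lr."""
    return [p - lr * stochastic_sign(g, bound, rng) for p, g in zip(params, grads)]


# Empirical check of unbiasedness (up to the known 1 / bound scale).
rng = random.Random(0)
samples = [stochastic_sign(0.3, 1.0, rng) for _ in range(50_000)]
mean_sign = sum(samples) / len(samples)  # should be close to 0.3

# A single update on a toy two-parameter vector.
new_params = stochastic_sign_step([1.0, -2.0], [0.5, -0.5], lr=0.1, bound=1.0, rng=rng)
```

A deterministic `sign(g)` would return `+1` for every positive `g`, discarding the magnitude. The randomized version still sends one bit per coordinate, but its average recovers the scaled gradient, which is the sense of "unbiased" assumed here.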

関連記事

大規模言語モデル

AWS、NVIDIA、Microsoft、OpenAIなどが主導するカスタムLLM訓練プラットフォーム市場は2026年から2035年にかけて急速に拡大予定

Yahoo Finance AI·2026年4月20日

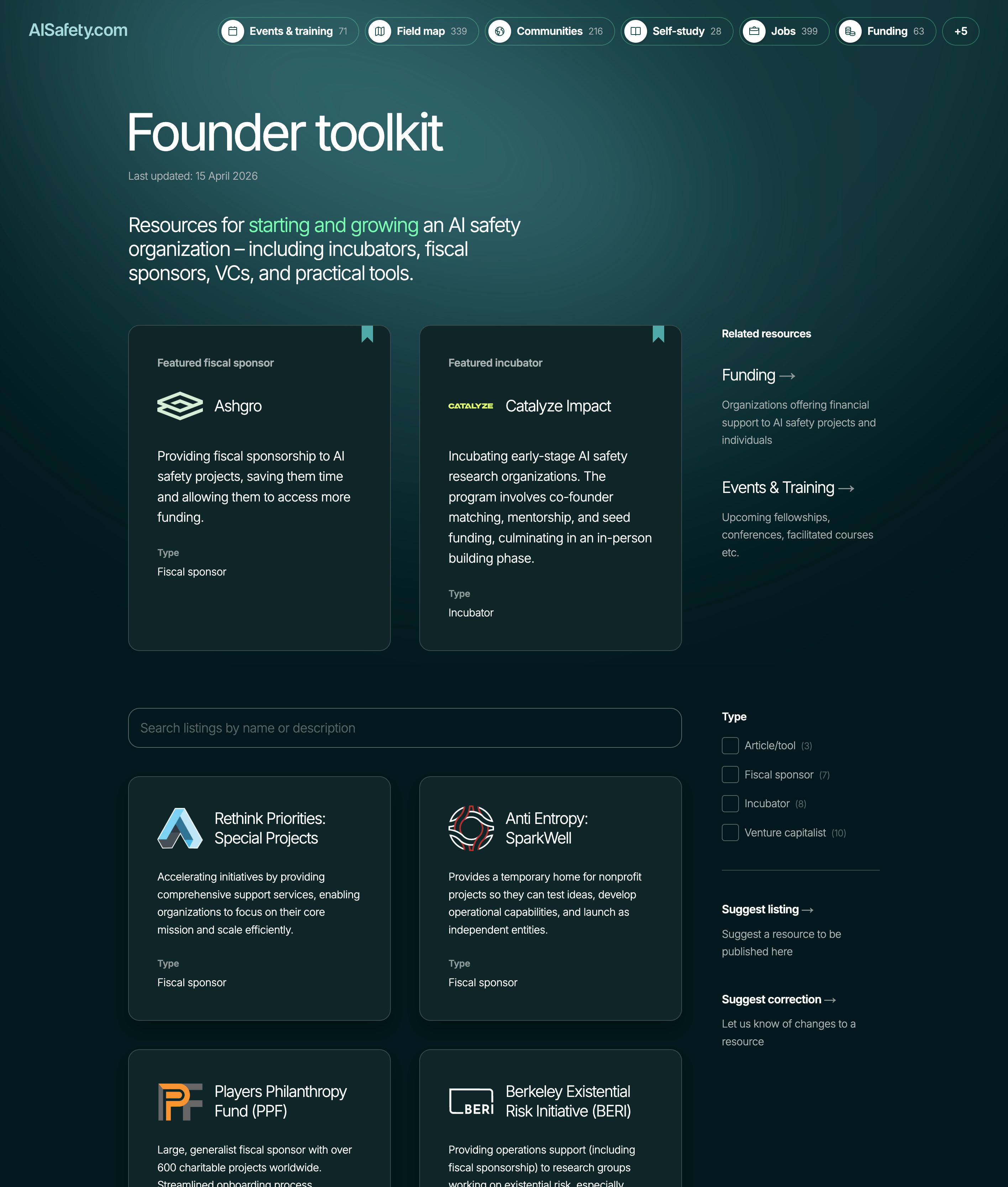

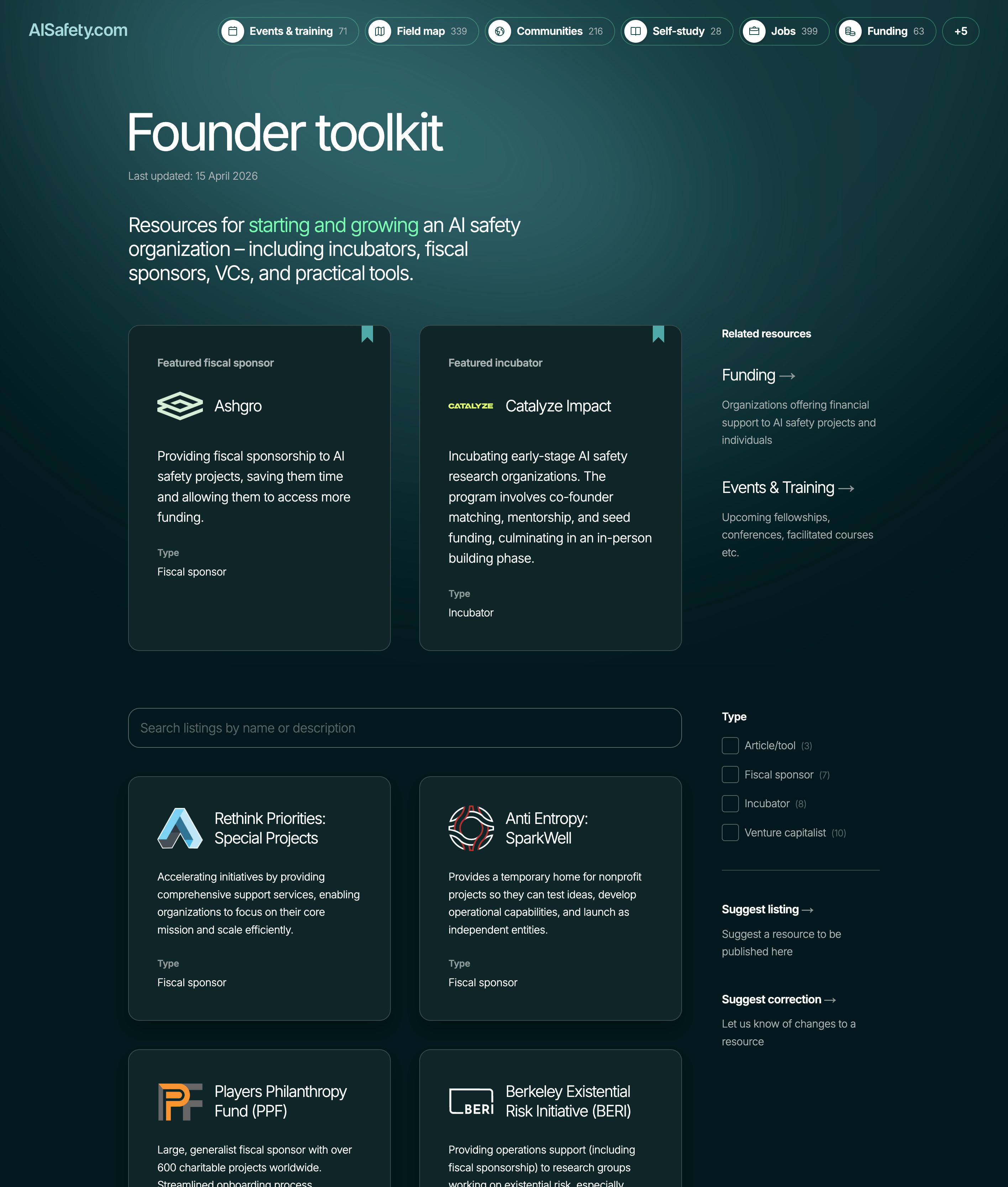

AI安全性・アラインメント

AI安全性組織の設立を支援するため、AISafety.comが創業者向けの資源ページを新たに公開

LessWrong AI·2026年4月20日

大規模言語モデル

オープンウェイトモデルの厳選ガイドが、本番環境でのLLMデプロイメント実装を支援

Hacker News·2026年4月20日

大規模言語モデル

AIエージェントがコードベースを扱えるかを評価するための「コードベース準備グリッド」がGitHubで公開された

Hacker News·2026年4月20日

大規模言語モデル

AI エージェントの動作を可視化・監視することが、信頼性の高いシステム構築に不可欠となっている。

Hacker News·2026年4月20日
